Your AI workload does not need a 70-billion-parameter model and a cloud API bill that scales with every request. For IoT sensors, offline field applications, privacy-sensitive enterprise tools, and embedded devices in rural connectivity zones, what you need is a compact, task-specific model that runs locally – fast, private, and without cloud dependency. That is exactly what small language model development companies in India build – and why leading organisations are actively sourcing these capabilities domestically.

The global SLM market is projected to expand from USD 0.93 billion in 2025 to USD 5.45 billion by 2032 at a CAGR of 28.7%, according to MarketsandMarkets – driven by surging demand for on-device AI, model compression, and privacy-first deployments in healthcare, finance, and manufacturing. India is positioned to witness the fastest growth in the Asia-Pacific region during this period. The three small language model development companies in India below have been evaluated specifically for their ability to build, fine-tune, and deploy compact models – not just integrate existing APIs.

Each company below was verified for topic-specific capability: documented SLM or on-device model work, relevant technical stack (PyTorch, NVIDIA NeMo, knowledge distillation, quantization), and confirmed India headquarters. Softlabs Group leads this list with 22+ years in custom AI and software development, deep IoT deployment experience, and the technical foundations required to architect SLMs for resource-constrained environments.

What Makes Small Language Model Development Important for Indian Businesses?

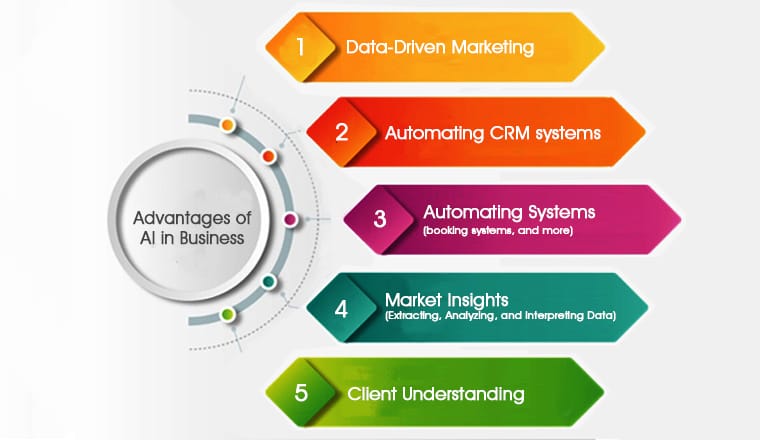

Small language model development enables Indian enterprises to deploy AI in environments where cloud-based LLMs fail – offline field operations, edge hardware, low-bandwidth rural zones, and data-sensitive regulated industries.

India’s AI policy white paper, released ahead of the India AI Impact Summit 2026 in New Delhi, explicitly backed SLMs alongside frontier models – recognising their practical value for a country with linguistic diversity, infrastructure constraints, and growing compliance requirements under the DPDP Act. The government has approved 12 players to build indigenous LLMs and SLMs, reflecting how seriously the sector is being treated at a national level.

For Indian enterprises, the business case is concrete. SLMs can be fine-tuned on proprietary data using techniques like LoRA, knowledge distillation, and model quantization – then deployed on edge devices at a fraction of the per-token cost of cloud APIs. A domain-specific SLM processing contracts or invoices costs roughly 10-15x less per document than an equivalent GPT-class API call at scale. Manufacturing facilities benefit from sub-10ms inference for quality control. Healthcare providers gain DPDP-compliant on-device processing. IoT deployments gain AI capability without cloud round-trips. Partnering with capable custom LLM development companies in India that understand both the model engineering and the deployment context accelerates time to value significantly.

Which Small Language Model Development Companies in India Build for Edge and On-Device AI?

The three small language model development companies in India below have been verified through multi-source validation: LinkedIn headcount confirmation, live proof link verification, topic-specific SLM capability assessment, and geographic HQ confirmation.

1. Softlabs Group

★ Verified Listing

SLM Services and Edge AI Expertise: Softlabs Group builds custom AI systems across the full model development lifecycle – from architecture selection and training data preparation through fine-tuning, quantization, and deployment on edge or IoT hardware. Their technology stack spans Python, PyTorch, TensorFlow, LangChain, and LlamaIndex – the same tools that underpin production-grade SLM development pipelines. Their IoT development practice adds direct experience with the resource-constrained environments where SLMs live.

Softlabs’ expertise in private and on-premises AI deployment – demonstrated through their private LLM development practice – translates directly to small language model work. Both disciplines require the same foundation: secure model serving, controlled infrastructure, domain-specific fine-tuning, and deployment without cloud dependency. Their IoT deployment history – covering real-time sensor integration, edge hardware, and embedded systems across manufacturing and healthcare clients – gives them the operational context most AI-only firms lack. A team that has deployed pill-dispensing IoT devices and AI-powered inventory trackers understands the compute and connectivity constraints that make SLMs the right architectural choice. Combined with their AI-assisted development methodology using Cursor, Claude, and GitHub Copilot, Softlabs delivers model fine-tuning and integration cycles significantly faster than conventional approaches.

Contact: business@softlabsgroup.com | +91 7021649439

Explore Our AI Development Capabilities →

2. SunTec India

★ Verified Listing

SunTec India’s LLM fine-tuning practice explicitly covers knowledge distillation – the process of transferring capability from a large teacher model like GPT-4 into a smaller, faster student model such as Phi-3 – to produce compact models with near-full-LLM performance. This is one of the primary techniques used in small language model development, and SunTec names it as a core service offering. Their fine-tuning work spans open-source architectures including LLaMA, Mistral, Falcon, and Qwen, covering task-based, instruction-based, domain-based, and preference-based fine-tuning approaches.

The Delhi-based firm operates as a service company, meaning clients commission model work rather than receiving off-the-shelf products. Their approach to model compression is methodical – beginning with architecture selection and dataset curation, moving through distillation or direct fine-tuning, and delivering models adapted to the client’s domain and deployment target. For businesses that need a compact, efficient model trained on proprietary data without the infrastructure overhead of general-purpose LLMs, SunTec India offers a structured service pathway.

3. Persistent Systems

★ Verified Listing

Persistent Systems launched GenAI Hub, an enterprise platform that includes Custom Model Pipelines – components that facilitate the creation and integration of bespoke LLMs and Small Language Models (SLMs) into the GenAI ecosystem, covering data preparation and model fine-tuning for both cloud and on-premises deployments. This is an explicitly named SLM capability within a production platform used by enterprise clients. Their NVIDIA partnership provides access to NeMo framework tooling for model customization and edge-compatible deployment.

As a publicly listed firm (NSE/BSE: PERSISTENT) with over 23,800 employees across 21 countries, Persistent Systems brings enterprise-scale delivery capacity to SLM projects. Their GenAI Hub also includes a Gateway that routes across LLMs and an Evaluation Framework using an “AI to validate AI” approach – both relevant to production SLM deployments where performance validation and model switching are operational requirements. For large Indian enterprises with complex infrastructure and compliance requirements, Persistent offers a structured, enterprise-grade pathway into small language model development.

Quick Reference: Small Language Model Development Providers by Specialisation

Softlabs Group

Location: Mumbai, Maharashtra

Key Specialty: Custom SLM architecture, IoT edge AI integration, and on-device model deployment for resource-constrained environments

SunTec India

Location: New Delhi, Delhi

Key Specialty: Knowledge distillation services producing compact, near-LLM-performance models from teacher models like GPT-4

Persistent Systems

Location: Pune, Maharashtra

Key Specialty: Enterprise SLM creation and deployment via GenAI Hub Custom Model Pipelines, including on-premises deployment

Ready to discuss your small language model development requirements with our team?

Talk to Softlabs Group

How Do You Verify a Company’s Small Language Model Development Capabilities?

Evaluate companies based on documented evidence of SLM-specific work – not general AI or LLM integration claims. Small language model development requires distinct skills: model compression, architecture selection for low-parameter targets, fine-tuning on domain-specific datasets, and deployment on edge or constrained hardware. Generic AI vendors often lack these. The top small language model development companies in India distinguish themselves through verifiable proof of this work, not marketing copy.

The companies listed above were verified through rigorous multi-source validation across five criteria:

SLM-Specific Capability Confirmation: Each company must explicitly reference small language models, model distillation, on-device inference, or edge AI language model deployment – not just “AI development” or “LLM integration.” We confirmed the distinction.

Live Proof Link Validation: Every proof link was manually tested. No dead URLs, no homepage redirects. SunTec India’s LLM fine-tuning page and Persistent Systems’ GenAI Hub press release both load and contain the claimed SLM-specific content.

Geographic HQ Confirmation: India headquarters verified via company websites, MCA registrations, and LinkedIn – not satellite offices or “founded by Indians” claims.

Technical Stack Assessment: For small language model development specifically, we verified that companies reference relevant frameworks and techniques – PyTorch, TensorFlow, NVIDIA NeMo, LangChain, knowledge distillation, LoRA fine-tuning, model quantization – rather than generic buzzwords.

Deployment Context Awareness: SLM development is only half the work. The other half is deploying on edge devices, IoT hardware, or on-premises infrastructure. We assessed whether companies demonstrate experience with the environments where SLMs actually operate.

Questions to ask any small language model development company during vendor evaluation:

- Can you demonstrate a working SLM deployment – ideally on-device or on-premises – not just a fine-tuned API wrapper?

- Which compression techniques do you use – quantization (INT4/INT8), pruning, or knowledge distillation – and for what target hardware?

- What is your smallest successfully deployed model in terms of parameter count, and what inference latency did it achieve?

- How do you handle domain-specific dataset curation for fine-tuning, and what data privacy controls exist during that process?

- Do you support DPDP-compliant on-premises deployment, or does the model training pipeline require third-party cloud access?

What’s Happening in Small Language Model Development Right Now?

Small language model development has moved from experimental territory to mainstream enterprise strategy, with India emerging as both a consumer and builder of this technology.

The India AI Impact Summit 2026, held at Bharat Mandapam in New Delhi in February, placed SLMs at the centre of India’s national AI strategy. The Indian government has approved 12 organisations to build indigenous LLMs and SLMs – including multilingual compact models targeting all 22 scheduled Indian languages. India’s policy white paper explicitly backed SLMs as the practical AI deployment layer for the country’s infrastructure and connectivity realities. This policy momentum is translating into enterprise procurement: regulated sectors including BFSI, healthcare, and government services are prioritising on-device and on-premises models over cloud API dependency.

On the technical side, Microsoft’s Phi-4 series – extended in early 2025 with Phi-4-mini-instruct and Phi-4-multimodal – expanded SLM capability significantly, with enhanced reasoning and multilingual understanding at a fraction of the compute of larger models. The MarketsandMarkets SLM market report projects the sector will grow at a CAGR of 28.7% through 2032, with edge device deployment as the fastest-growing segment. Asia Pacific, driven by India, China, and Japan, is expected to deliver the highest regional growth rate. For leading agentic AI development companies in India, SLMs are increasingly the model layer of choice for autonomous agents running in offline or latency-sensitive environments.

What Should You Expect During Small Language Model Development Implementation?

Implementation of a custom small language model typically spans 8-16 weeks, depending on whether you start with fine-tuning an existing base model or require a purpose-built compact architecture from scratch.

Discovery and Architecture Selection (2-3 weeks): The team evaluates your use case, target hardware, latency requirements, and data availability. This phase determines the base model (Phi-3, Mistral 7B, Llama 3.2, or smaller) and compression strategy – whether knowledge distillation, LoRA fine-tuning, quantization, or a combination.

Dataset Preparation and Fine-Tuning (3-6 weeks): Domain-specific fine-tuning requires curated, clean training data. This phase often takes longer than clients expect – data quality directly determines model quality. Experienced small language model development companies bring data pipeline tooling to accelerate this step.

Quantization and Optimisation (1-2 weeks): Post-training, the model is compressed for target hardware using INT4 or INT8 quantization. Performance benchmarks are run on the actual deployment device to confirm latency targets are met.

Integration and Testing (2-3 weeks): The model is integrated into your application or embedded system. Edge deployment uses frameworks like Ollama or ONNX Runtime. This phase includes inference testing, failure mode analysis, and guardrail implementation.
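
As a quick sanity check on the phase estimates above, the sketch below adds up the minimum and maximum weeks per phase. The week ranges are the ones quoted in the phase descriptions; the names `PHASES` and `total_range` are illustrative, and the calculation assumes the phases run strictly one after another.

```python
# Phase durations in weeks as (min, max), taken from the phase descriptions.
PHASES = {
    "Discovery and Architecture Selection": (2, 3),
    "Dataset Preparation and Fine-Tuning": (3, 6),
    "Quantization and Optimisation": (1, 2),
    "Integration and Testing": (2, 3),
}


def total_range(phases):
    """Return (min_weeks, max_weeks), assuming phases run back to back."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


low, high = total_range(PHASES)
print(f"Sequential phases: {low}-{high} weeks")
```

Run back to back, the four phases come to 8-14 weeks, so the 8-16 week overall range leaves about two weeks of headroom at the upper end, which could absorb work that sits outside the four phases, such as compliance validation.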

Common challenges include data scarcity for niche domains (addressed through synthetic data generation), model accuracy trade-offs from aggressive quantization (managed through careful precision calibration), and hardware variation across the edge device fleet. Indian businesses deploying SLMs in regulated industries should plan for compliance validation – particularly under DPDP – as a distinct phase of testing.
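
To make the quantization trade-off concrete, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is a simplified illustration, not how any particular vendor or toolkit does it: the weights are synthetic random values, the function names are made up for this example, and production toolchains typically use per-channel or group-wise scales with calibration data.

```python
import random


def quantize_symmetric(weights, bits):
    """Map floats to signed integers using one scale for the whole tensor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for INT8, 7 for INT4
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]


def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


random.seed(0)
# Synthetic stand-in for a weight tensor (assumption: roughly Gaussian values).
weights = [random.gauss(0.0, 0.05) for _ in range(10_000)]

for bits in (8, 4):
    q, scale = quantize_symmetric(weights, bits)
    err = mean_abs_error(weights, dequantize(q, scale))
    print(f"INT{bits}: scale={scale:.6f}, mean abs error={err:.6f}")
```

The INT4 run uses a much coarser step than INT8 and shows a correspondingly larger reconstruction error, which is the accuracy cost that precision calibration on the target device is meant to keep in check.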

What Influences Small Language Model Development Costs in India?

Small language model development costs in India depend primarily on whether you’re fine-tuning an existing open-source base model or commissioning a purpose-built compact architecture from scratch.

Base model strategy: Fine-tuning Phi-3, Mistral 7B, or Llama 3.2 on domain-specific data is substantially more cost-efficient than training from scratch. Most enterprise SLM projects in India begin with fine-tuning, reducing both time and compute investment significantly.

Dataset size and quality: Proprietary dataset curation is typically the largest cost variable. If your organisation has structured, clean domain data, this phase accelerates. If not, synthetic data generation or expert annotation adds effort and cost.

Target hardware and compression depth: Deploying on a high-end edge server requires less aggressive quantization than deploying on a Raspberry Pi or smartphone. More aggressive compression requires more calibration cycles to maintain accuracy.

Integration complexity: Embedding a fine-tuned SLM into an existing IoT platform or enterprise application introduces integration engineering effort that varies with system complexity.

India’s development cost advantage is real and material for SLM projects. The same fine-tuning and quantization work that costs significantly more with US or EU firms is available from experienced Indian teams at competitive rates – without compromising on the PyTorch, NVIDIA NeMo, or LangChain expertise the work requires. Indian small language model development companies also benefit from a large pool of ML engineers familiar with both open-source model ecosystems and enterprise deployment constraints. Plan budget across three phases: data preparation, model development, and integration. Get detailed scope definitions before project start – ambiguous requirements are the primary driver of cost overruns in SLM development.

Frequently Asked Questions About Small Language Model Development in India

What is a small language model and how is it different from an LLM?

A small language model (SLM) is a compact AI language model with typically 1-13 billion parameters, designed to run efficiently on edge devices, smartphones, IoT hardware, or on-premises servers without cloud dependency. Frontier large language models like GPT-4 are widely reported to contain hundreds of billions of parameters or more, although exact figures are often undisclosed, and they require significant cloud infrastructure to serve. SLMs can achieve comparable performance on specific, well-defined tasks through training on curated domain data and compression techniques like knowledge distillation, model pruning, and quantization. The trade-off is scope: an SLM excels at its target task but cannot generalise across arbitrary domains the way a frontier LLM can.

Which companies in India can build and deploy small language models for edge devices?

The verified small language model development companies in India listed above – Softlabs Group, SunTec India, and Persistent Systems – each demonstrate documented SLM-specific capability. Softlabs Group brings IoT edge deployment experience alongside custom AI development. SunTec India explicitly offers knowledge distillation services targeting compact student models like Phi-3. Persistent Systems offers SLM creation through its GenAI Hub Custom Model Pipelines with on-premises deployment support. When evaluating, look for companies that reference specific compression techniques and target hardware, not just general “AI development.”

How do small language model development companies in India approach model compression?

Indian SLM development firms typically use three primary compression techniques, often in combination. Knowledge distillation trains a smaller student model to replicate the outputs of a larger teacher model – preserving 90-95% of capability at 10% of the parameters. Model quantization reduces numerical precision from 32-bit floats to INT8 or INT4, cutting memory footprint and accelerating inference on edge hardware. Pruning removes less important model weights to reduce size further. Most production projects combine fine-tuning on domain-specific data with quantization for target hardware, validated through inference benchmarks on the actual deployment device.
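
The distillation step described above can be sketched as a loss function. This is a minimal, dependency-free illustration of the commonly used soft-target formulation (KL divergence between temperature-softened teacher and student distributions, scaled by the temperature squared). The logits and function names are invented for the example; in practice this term is usually combined with a standard cross-entropy loss on ground-truth labels and computed in a framework such as PyTorch.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Soft-target loss: T^2 * KL(teacher_soft || student_soft)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    return temperature ** 2 * kl_divergence(p_teacher, p_student)


# Hypothetical logits over three classes.
teacher = [4.0, 1.0, 0.2]
student_far = [0.5, 2.5, 1.0]    # disagrees with the teacher
student_close = [3.8, 1.1, 0.3]  # nearly matches the teacher

print("far student loss:  ", distillation_loss(student_far, teacher))
print("close student loss:", distillation_loss(student_close, teacher))
```

A student whose output distribution tracks the teacher's gets a loss near zero, so minimising this term pushes the smaller model to reproduce the larger model's behaviour rather than only the hard labels.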

Can a small language model run offline on IoT devices?

Yes. On-device offline inference is one of the primary use cases for SLMs. Models like Phi-3-mini and Llama 3.2-1B, quantized to 4-bit precision, can run on hardware as modest as Raspberry Pi 5 or NVIDIA Jetson Nano – without any internet connection. This makes them well-suited for manufacturing shop floors with poor connectivity, rural healthcare deployments, field operations, and embedded systems where data privacy requires that no information leave the device. The inference speed depends on hardware and model size: sub-10ms latency is achievable for task-specific SLMs on edge server-class hardware.
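
Whether a given model fits on a given board is largely a memory question, and a rough estimate is simple arithmetic. The sketch below computes the memory needed for the weights alone at different precisions. The parameter counts are approximate figures and should be treated as assumptions; the estimate ignores the KV cache, activations, runtime overhead, and the small amount of extra storage quantization formats need for scales.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Approximate parameter counts (assumptions for illustration).
MODELS = {
    "Phi-3-mini": 3.8e9,
    "Llama 3.2-1B": 1.2e9,
}

for name, params in MODELS.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params, bits)
        print(f"{name} @ {bits}-bit: {gb:.2f} GB")
```

Comparing these figures with the RAM available on the target device, after leaving room for the operating system and the inference runtime, gives a first-pass answer on feasibility before any on-device benchmarking.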

What frameworks do Indian companies use to build small language models?

The standard stack for small language model development in India includes PyTorch for model training and fine-tuning, with Hugging Face Transformers providing access to open-source base models. NVIDIA NeMo is used for model customization and enterprise-grade deployment pipelines. For parameter-efficient fine-tuning, LoRA (Low-Rank Adaptation) is widely used to minimise compute requirements. Deployment frameworks include Ollama for local inference and ONNX Runtime for cross-platform edge deployment. LangChain and LlamaIndex are used when building RAG-augmented SLM applications that combine on-device models with retrieved context.
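
To show why LoRA reduces compute requirements, the following sketch counts trainable parameters for a single weight matrix. LoRA freezes the original matrix and trains two low-rank factors instead, so the trainable count grows with the rank rather than with the full matrix size. The hidden size of 4096 and the function names are hypothetical, chosen only for illustration; real fine-tuning would typically use a library such as Hugging Face PEFT rather than hand-rolled arithmetic.

```python
def full_params(d_out, d_in):
    """Parameters in the original (frozen) weight matrix."""
    return d_out * d_in


def lora_trainable_params(d_out, d_in, rank):
    """LoRA trains two factors: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


d = 4096  # hypothetical size of one square projection matrix
for rank in (4, 8, 16):
    lora = lora_trainable_params(d, d, rank)
    full = full_params(d, d)
    share = 100 * lora / full
    print(f"rank={rank}: {lora:,} trainable vs {full:,} frozen ({share:.2f}%)")
```

At rank 8, for example, this single matrix has 65,536 trainable parameters against roughly 16.8 million frozen ones, or about 0.39%, which is why LoRA fine-tuning fits on far more modest hardware than full fine-tuning.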

How long does SLM fine-tuning take with an Indian development partner?

A fine-tuning engagement with an experienced small language model development company in India typically spans 3-6 weeks for the model development phase alone, not including data preparation or integration. The largest time variable is dataset curation – if your organisation has clean, structured domain data ready, fine-tuning can proceed faster. If data needs to be collected, cleaned, or synthetically augmented, add 2-4 weeks. Full project timelines from kickoff to deployed, integrated SLM typically run 8-16 weeks depending on target hardware, integration complexity, and compliance requirements.

How much does small language model development cost in India?

Small language model development costs in India vary with project scope. The best small language model development companies in India offer significant cost advantages over US or EU alternatives for equivalent ML expertise in PyTorch, NeMo, and LoRA fine-tuning. Fine-tuning an existing open-source model like Mistral 7B or Phi-3 on domain data is considerably more cost-efficient than training from scratch. The main cost drivers are dataset preparation, the number of fine-tuning iterations required to hit accuracy targets, and integration engineering for the deployment environment. Firms that use AI-assisted development tools can further compress delivery timelines, reducing overall project cost without compromising model quality.

Conclusion: Choosing the Right Small Language Model Development Partner in India

The leading small language model development companies in India on this list represent verified providers with documented SLM-specific capabilities – from knowledge distillation and open-source fine-tuning to enterprise platform-level SLM creation and IoT edge deployment. Each was included because they explicitly reference the techniques and deployment contexts that distinguish genuine SLM work from generic AI integration.

India’s position in this market is strengthening rapidly. Government-backed indigenous SLM initiatives, the DPDP Act driving demand for on-premises AI, and the country’s deep pool of ML engineering talent are converging to make India a credible centre for small language model development – not just consumption. Organisations that act now to develop domain-specific SLMs for their proprietary data will hold a structural advantage as inference costs and edge hardware continue to improve.

The companies above represent India’s proven expertise across the SLM development stack. Whether your requirement is a compact model for offline field operations, an IoT-embedded inference system, or a DPDP-compliant on-premises AI assistant, partnering with a specialist who understands both the model engineering and the deployment environment is what separates successful SLM projects from expensive fine-tuning exercises.

Build Your Small Language Model Solution with Softlabs Group

Softlabs Group specialises in custom small language model development tailored to your business requirements, target hardware, and integration architecture. The team combines 22+ years of enterprise AI and IoT development experience with expertise in PyTorch, LangChain, and edge deployment frameworks to deliver production-ready SLMs for on-device, offline, and resource-constrained environments.

Whether you need a domain-specific SLM fine-tuned on proprietary data or an end-to-end edge AI system integrating a compact model with IoT infrastructure, the AI-assisted development approach delivers quality solutions 2-3x faster than conventional methods.