Your teams have answers somewhere. They live in a Confluence page from six months ago, a SharePoint folder three levels deep, or an email thread nobody tagged. Employees spend hours each week hunting for information that already exists – just not where they can find it quickly. An enterprise knowledge base system built on a large language model changes that: your staff asks a question in plain English and gets a cited, precise answer pulled from your actual internal documents. Enterprise knowledge base LLM development companies in India are seeing strong demand as organisations across BFSI, healthcare, manufacturing, and logistics replace keyword-based search with AI-driven knowledge retrieval. According to research published by McKinsey, generative AI could add $2.6 trillion to $4.4 trillion in annual value to the global economy across the use cases it analysed, with internal knowledge retrieval among the applications the report discusses. The eight enterprise knowledge base LLM development companies in India below represent verified providers with explicit RAG system expertise, documented deployment capability, and confirmed India headquarters.

Each company on this list was evaluated for specific enterprise knowledge base LLM capabilities on their service pages – not generic AI claims. Softlabs Group leads with 22+ years of enterprise system development, a dedicated enterprise knowledge base LLM solution, and AInfiniteCore, a purpose-built enterprise knowledge base product developed through their AI venture Ainfinite AI.

What Makes Enterprise Knowledge Base LLM Important for Indian Businesses?

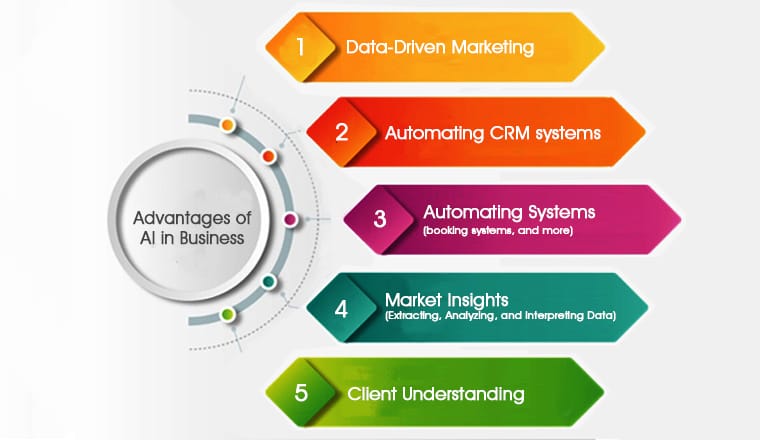

Enterprise knowledge base LLM development gives Indian enterprises measurable operational gains by replacing manual, siloed information retrieval with AI-driven systems that answer employee queries in seconds – and cite the source documents they drew from.

Most mid-to-large Indian organisations manage knowledge across 10 or more tools simultaneously: Confluence, SharePoint, ticketing systems, legacy ERPs, and email archives. Each operates as its own silo with its own search logic. A compliance executive at an NBFC needs to cross-reference three policy documents to answer a client query. A procurement officer at a manufacturer needs to locate an SOP buried four folders deep before approving a release. The time cost accumulates into thousands of dollars per employee annually in lost productivity – and the less visible damage is decisions made on incomplete information.

Beyond time, there is a knowledge retention risk. When experienced staff leave, their institutional expertise often goes with them. An AI-powered knowledge base that continuously ingests updated documents, SOPs, and internal data keeps that expertise accessible regardless of headcount changes. The system preserves organisational memory in a form that every authorised employee can query.

India’s BFSI sector faces additional pressure from the Reserve Bank of India’s evolving AI governance guidance, which requires financial institutions to keep sensitive customer data within controlled environments. This is accelerating demand for private knowledge base deployments – on-premise or private cloud systems where queries against internal documents never leave the institution’s infrastructure. RAG as a service companies in India have responded by building India-specific private deployment frameworks that align with RBI and IRDAI requirements.

Which Companies in India Build Enterprise Knowledge Base LLM Solutions?

The eight enterprise knowledge base LLM development companies in India below have been verified through multi-source validation: LinkedIn headcount confirmation, live proof link verification, topic-specific capability assessment, and geographic HQ confirmation.

1. Softlabs Group

★ Verified Listing

Core Expertise in Enterprise Knowledge Base LLM: Softlabs Group develops custom enterprise knowledge base LLM systems that connect internal documents – policies, SOPs, manuals, contracts, and compliance materials – to a RAG-powered AI interface. Employees query company knowledge through natural language and receive cited, permission-aware answers drawn from the connected document store. Systems support integration with Confluence, SharePoint, Notion, and proprietary internal tools, with role-based access control enforcing document-level permissions across user groups.

Softlabs Group’s capability in this domain is demonstrated through two distinct offerings. The dedicated enterprise knowledge base LLM solution architecture delivers the full system – document ingestion pipeline, vector embedding, hybrid search, LLM orchestration, and RBAC – built to the client’s infrastructure requirements and data classification rules. Separately, their AI venture Ainfinite AI has built AInfiniteCore, a commercial enterprise knowledge base product that clients can deploy without starting from scratch. With 22+ years of enterprise system integration across fintech, healthcare, manufacturing, and logistics – and production deployments for clients including Nippon India Mutual Fund, Afcons, MYFI Australia, and FPMcCann UK – the team brings institutional context that pure AI startups cannot replicate.

Contact: business@softlabsgroup.com | +91 7021649439

View Our Enterprise Knowledge Base LLM Solution →

2. Cymetrix Software

★ Verified Listing

Cymetrix Software holds the strongest documented case study on this list for enterprise knowledge base LLM work. The company built a RAG-based enterprise chatbot that accessed internal knowledge base data across HR policies, IT guidelines, compliance documents, and FAQs. The system was constructed using LangChain for LLM orchestration, Google Vertex AI for the language model layer, BigQuery for structured data retrieval, and vector embeddings for semantic search – with role-based access controls ensuring employees received answers only from document sets they were already permitted to view.

Beyond this deployment, Cymetrix provides AI and ML development, Generative AI solutions, and AI agent consulting as core services. Their background as a Salesforce consulting partner gives them integration depth in enterprise CRM environments – directly relevant when building knowledge bases that must connect to sales and service data stores. Clients served include IQVIA, Tata Steel, and GE Money across BFSI, healthcare, and manufacturing sectors.

3. Kanhasoft

★ Verified Listing

Kanhasoft has a dedicated AI-Enabled Knowledge Base service page – a specific capability page, not a generic AI services listing. Their documented case studies include a multilingual knowledge base for a German insurance company that delivered a 55% reduction in query handling time, a healthcare compliance knowledge base with intent detection and contextual response, and a SaaS in-app knowledge system. Each case demonstrates their core approach: NLP-powered search with intent understanding, self-learning AI that improves from user interactions, and enterprise-grade security for sensitive document stores.

Founded in 2013, Kanhasoft builds custom software, SaaS applications, and AI/ML solutions for clients across the USA, Europe, and the Middle East. Their knowledge base implementations support multiple languages – a differentiator for Indian enterprises with multilingual employee bases or international operations that need a knowledge system to serve users across language boundaries without building separate instances per language.

4. Openxcell

★ Verified Listing

Openxcell’s LLM Development service page states their RAG-powered solutions draw on internal knowledge sources – FAQs, PDFs, and document repositories – to build context-aware enterprise systems. The company supports GPT, LLaMA, and Claude as underlying models and offers enterprise-grade deployment including on-premises options for organisations where data sovereignty requirements prevent cloud-based LLM processing. Their AI observability capability is a differentiator: they instrument knowledge base systems to track answer quality, retrieval accuracy, and system performance after launch – not just during delivery.

Founded in 2009 and CMMI Level 3 certified, Openxcell is one of the most established AI development companies on this list. A team of 201-500 professionals provides the capacity for large-scale enterprise knowledge base implementations requiring dedicated project teams, data engineering resources, and long-term maintenance commitments. AWS partner status is relevant for cloud-based private RAG deployments on AWS infrastructure.

5. DevsTree IT Services Pvt. Ltd.

★ Verified Listing

DevsTree has a dedicated RAG & LLM Development page covering enterprise knowledge base integration, vector database configuration, and LLM orchestration across GPT, Gemini, Claude, LLaMA, Mistral, and Falcon. This multi-model support is practical for regulated enterprise environments where model selection depends on data classification requirements – some document stores may be suitable for cloud LLMs while others require on-premise Mistral or LLaMA deployments. Their secure on-premises and private cloud deployment capability explicitly targets finance, healthcare, and legal sector clients operating under strict data governance requirements.

ISO 9001:2008 certified and founded in 2013, DevsTree also offers Intelligent Document Processing and Agentic Workflow Automation as adjacent services. This combination matters for enterprises whose knowledge base needs more than static retrieval – documents must be automatically ingested, classified, and re-indexed as they are created or updated. Pairing RAG retrieval with document automation turns a static knowledge base into a self-maintaining one.

6. TOPS Infosolutions Pvt. Ltd.

★ Verified Listing

TOPS Infosolutions offers RAG as a Service with a dedicated page that specifically addresses enterprise knowledge base use cases. Their documentation explains how organisations transform internal documents, emails, databases, and other datasets into an interactive chat experience – and distinguishes between two RAG model types. Active RAG retrieves from live data sources in real time, which is essential for frequently updated policy documents and product information. Passive RAG draws from pre-compiled stores that are periodically refreshed, which is more efficient for stable reference libraries and technical documentation.

With a team of 201-500 and a service portfolio that extends to Agentic AI Systems, TOPS Infosolutions can build knowledge base implementations that go beyond static retrieval – incorporating agents that act on retrieved information, route queries, or escalate to human operators when retrieval confidence is low. Their scale and track record in custom enterprise software give them the depth for long-term maintenance commitments that production knowledge base systems require.

7. Signity Solutions

★ Verified Listing

Signity Solutions offers Custom RAG Development as a dedicated service and has published detailed technical content on enterprise RAG security – covering vector database vulnerabilities, retrieval-stage access controls, query validation, and regulatory compliance within RAG pipelines. This security focus is directly relevant for enterprise knowledge base projects where the document store contains sensitive HR, legal, or financial content that must not surface across permission boundaries. Their service pages describe building AI systems that connect to internal document repositories and enterprise data to provide context-aware responses to authorised users.

Founded in 2009 with over 1,000 delivered projects and an 80% repeat client rate, Signity Solutions demonstrates adherence to GDPR, HIPAA, SOC 2, and ISO/IEC 27001 compliance frameworks – a meaningful differentiator for Indian enterprises building knowledge bases that store regulated data. Their Agentic AI services extend the capability beyond retrieval to post-query workflows where agents act on retrieved information, route requests, or chain multiple knowledge lookups before generating a final response.

8. Prismetric Technologies Pvt. Ltd.

★ Verified Listing

Prismetric Technologies lists RAG as a core architecture within their LLM Development Services – positioned alongside custom LLM fine-tuning for domain-specific knowledge retrieval. Their service documentation explicitly covers domain-specific knowledge scenarios: building systems where the LLM answers only from retrieved enterprise content rather than from general training knowledge. This retrieval constraint is a primary safeguard against hallucinated answers in production enterprise knowledge base deployments, and Prismetric’s focus on it indicates practical deployment understanding beyond surface-level AI marketing.

ISO 9001:2015 certified and founded in 2008, Prismetric brings 14+ years of enterprise software development to knowledge base implementations. Their Gandhinagar location in the Infocity technology zone places them in one of Gujarat’s established IT clusters. Document Automation and AI Agent Development as adjacent services support use cases where knowledge base retrieval feeds into automated decision-making or workflow execution – extending the knowledge base from a query tool into an active enterprise system component.

Quick Reference: Enterprise Knowledge Base LLM Development Companies in India by Specialization

| Company | Location | Key Specialty |
| --- | --- | --- |
| Softlabs Group | Lower Parel West, Mumbai | Custom enterprise knowledge base LLM systems + AInfiniteCore commercial KB product; private LLM deployment for regulated sectors |
| Cymetrix Software | Andheri East, Mumbai | RAG + Vertex AI enterprise chatbot with verified RBAC case study; Salesforce and CRM data integration |
| Kanhasoft | Ahmedabad, Gujarat | Multilingual AI-enabled knowledge bases with self-learning NLP; healthcare and insurance sector case studies |
| Openxcell | Ahmedabad, Gujarat | Enterprise-grade on-premise LLM deployment; AI observability monitoring for knowledge base performance post-launch |
| DevsTree IT Services | Ahmedabad, Gujarat | Multi-model LLM orchestration across 6 models; private cloud deployment for finance, healthcare, and legal sectors |
| TOPS Infosolutions | Ahmedabad, Gujarat | RAG as a Service with active and passive retrieval models; agentic AI integration for post-retrieval workflows |
| Signity Solutions | Mohali, Punjab | Custom RAG development with enterprise security focus; GDPR, HIPAA, SOC 2, and ISO/IEC 27001 compliance |
| Prismetric Technologies | Gandhinagar, Gujarat | RAG with domain-specific fine-tuning; document automation integrated with knowledge retrieval systems |

Ready to discuss your enterprise knowledge base LLM requirements with our team?

Talk to Softlabs Group

How Do You Verify a Company’s Enterprise Knowledge Base LLM Development Capabilities?

Evaluate enterprise knowledge base LLM development companies in India based on documented RAG system deployments, specific framework expertise, and verifiable access control implementation – not website capability claims alone.

The companies on this list were verified through a five-point process. First, each company must explicitly mention enterprise knowledge base LLM systems, RAG pipeline architecture, or internal knowledge management AI on their service pages. Second, every proof link was manually tested – it loads, contains knowledge base or RAG-specific content, and is not a homepage redirect. Third, India headquarters was confirmed through company websites, LinkedIn, and MCA records. Fourth, team size was taken from LinkedIn company pages only, with no estimates or third-party guesses. Fifth, for a technical topic like enterprise knowledge base LLM development, companies were assessed on whether they name specific frameworks – LangChain, LlamaIndex, Vertex AI, vector databases – rather than just “AI services.”

Multiple companies that appeared in competitor listicles for this topic failed one or more of these criteria and were excluded. This process ensures you are evaluating providers with genuine AI-powered knowledge base development capability, not generic vendors claiming every AI specialty. The leading enterprise knowledge base LLM development companies in India will demonstrate their capability through specific deployment evidence before you commit to a contract.

Questions to Ask Vendors When Evaluating:

- Can you show us a live enterprise knowledge base system or a detailed case study – specifically demonstrating how employees query documents and how access controls function?

- Which vector database do you use and why? What were the retrieval latency benchmarks in your most recent enterprise deployment?

- How does your system handle document updates and re-indexing so the knowledge base stays current without requiring manual intervention from IT teams?

- Does your implementation support hybrid search (vector semantic search combined with keyword matching), or semantic search alone? How does this choice affect retrieval accuracy for specific query patterns your business uses?

- For regulated data, do you offer on-premise or private cloud deployment where the language model processes queries entirely within our infrastructure?

- What does your post-deployment evaluation process look like? How do you measure and improve answer quality, retrieval accuracy, and hallucination rate after launch?
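
When a vendor answers the retrieval accuracy question, it helps to know what such a number can look like. The following is a minimal sketch of one common metric, recall@k, assuming you have a small set of test queries with reviewer-labelled relevant documents. The query strings, document IDs, and function names are hypothetical and are not taken from any vendor on this list.

```python
from typing import Dict, List, Set


def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant document IDs that appear in the top-k results."""
    if not relevant:
        return 0.0
    top_k = set(retrieved[:k])
    return len(top_k & relevant) / len(relevant)


def mean_recall_at_k(runs: Dict[str, List[str]],
                     labels: Dict[str, Set[str]], k: int) -> float:
    """Average recall@k over every labelled query."""
    scores = [recall_at_k(runs[q], labels[q], k) for q in labels]
    return sum(scores) / len(scores)


# Hypothetical evaluation set: query -> ranked doc IDs returned by the system.
runs = {
    "leave policy": ["hr-12", "hr-03", "it-07"],
    "vpn setup": ["it-07", "hr-12", "it-01"],
}
# Hypothetical ground truth: query -> doc IDs a reviewer marked as relevant.
labels = {
    "leave policy": {"hr-12", "hr-44"},
    "vpn setup": {"it-07"},
}

print(mean_recall_at_k(runs, labels, k=2))  # 0.75
```

Tracking a number like this before and after each re-indexing or model change is one way to make "retrieval accuracy" a comparable figure across vendors.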

What’s Happening in Enterprise Knowledge Base LLM Development Right Now?

Enterprise knowledge base LLM development has moved beyond the original vanilla RAG architecture, with GraphRAG, Agentic RAG, and hybrid search becoming standard considerations in production enterprise deployments.

The most significant shift in the past 12-18 months is from proof-of-concept deployments to production systems with measurable accountability. Early enterprise knowledge base projects in India focused on demonstrating that an LLM could answer from internal documents at all. Current deployments are evaluated on retrieval accuracy, response latency, hallucination rate, and access control precision – the operational metrics of a system that employees actually depend on. This shift selects for vendors who can instrument, monitor, and improve their systems after launch, not just deliver them.

RAG architecture itself has evolved. Vanilla RAG – vector search plus LLM generation – handles stable document collections and straightforward queries well. GraphRAG connects retrieved documents through relationship graphs, enabling multi-hop reasoning across related knowledge – useful for compliance frameworks where policy documents reference each other in chains. Agentic RAG gives the retrieval layer agency: the system decides which document sources to query, whether to chain multiple retrievals, and how to handle low-confidence responses. In parallel, private LLM development has accelerated, particularly for BFSI and healthcare clients where regulatory requirements demand on-premise processing and data sovereignty.
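
The multi-hop idea can be illustrated with a plain graph walk. This is only a sketch of following "references" links between documents up to a hop limit; it is not the GraphRAG method itself, which involves more than traversal. The document IDs and the `related_documents` helper are hypothetical.

```python
from collections import deque
from typing import Dict, List


def related_documents(graph: Dict[str, List[str]], start: str,
                      max_hops: int) -> List[str]:
    """Breadth-first walk over 'references' edges, up to max_hops from start."""
    seen = {start}
    order: List[str] = []
    queue = deque([(start, 0)])
    while queue:
        doc, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbour in graph.get(doc, []):
            if neighbour not in seen:
                seen.add(neighbour)
                order.append(neighbour)
                queue.append((neighbour, hops + 1))
    return order


# Hypothetical policy documents that cite each other in a chain.
references = {
    "kyc-policy": ["aml-policy"],
    "aml-policy": ["sanctions-sop", "record-retention"],
    "sanctions-sop": [],
}

print(related_documents(references, "kyc-policy", max_hops=2))
# ['aml-policy', 'sanctions-sop', 'record-retention']
```

With `max_hops=1` only the directly referenced policy is returned, which is roughly what single-step retrieval would see.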

In India specifically, the Digital Personal Data Protection Act has driven enterprise buyers toward private enterprise knowledge base LLM deployments where personal data referenced in internal documents stays within controlled infrastructure. BFSI, insurance, and healthcare enterprises are actively replacing cloud-based knowledge tools with custom-built systems where they own the entire stack – model, vector index, and query pipeline. The companies on this list that explicitly offer on-premise or private cloud options are best positioned for this segment of demand.

What Should You Expect During Enterprise Knowledge Base LLM Implementation?

Enterprise knowledge base LLM implementation typically requires 3-5 months for a production-ready system, with data preparation accounting for a larger share of that timeline than most organisations initially anticipate.

Phase Breakdown:

- Discovery & Architecture (2-4 weeks): Document inventory, source system identification, access control mapping, and selection of LLM model, vector database, and deployment environment. This phase determines whether the system runs on cloud, private cloud, or on-premise infrastructure.

- Data Preparation & Ingestion Pipeline (4-8 weeks): Document cleaning, format standardisation, chunking strategy design, and vector embedding generation. Data preparation typically accounts for 20-40% of total project time. Documents accumulated over years contain inconsistencies, duplicates, and outdated information that the knowledge base will surface faithfully unless addressed.

- RAG System Build (4-6 weeks): Vector database configuration, retrieval pipeline construction, LLM orchestration layer, RBAC integration, and hybrid search implementation (vector plus keyword).

- Testing, Evaluation & Refinement (2-4 weeks): Answer quality benchmarking, retrieval latency testing, access control verification, and user acceptance testing with real employee queries across representative document types.

- Deployment & Monitoring Setup (1-2 weeks): Production deployment, user onboarding, and performance monitoring instrumentation for ongoing quality tracking.
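
As a quick check on the phase ranges above, the arithmetic can be sketched as follows. It assumes the phases run strictly one after another, which is an assumption made here for illustration; in practice phases may overlap, which would shorten the upper bound.

```python
# Phase durations in weeks (min, max), copied from the breakdown above.
PHASES = {
    "Discovery & Architecture": (2, 4),
    "Data Preparation & Ingestion Pipeline": (4, 8),
    "RAG System Build": (4, 6),
    "Testing, Evaluation & Refinement": (2, 4),
    "Deployment & Monitoring Setup": (1, 2),
}

WEEKS_PER_MONTH = 52 / 12  # about 4.33


def total_weeks(phases):
    """Sum the minimum and maximum durations across all phases."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


low, high = total_weeks(PHASES)
print(low, high)  # 13 24
print(round(low / WEEKS_PER_MONTH, 1), round(high / WEEKS_PER_MONTH, 1))  # 3.0 5.5

# Share of the total taken by data preparation at each end of the range.
prep_lo, prep_hi = PHASES["Data Preparation & Ingestion Pipeline"]
print(round(prep_lo / low, 2), round(prep_hi / high, 2))  # 0.31 0.33
```

Run back to back, the ranges give roughly 3 to 5.5 months, and data preparation lands at about a third of the total, inside the 20-40% band quoted above.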

Access control complexity increases with organisation size. A system serving 50 employees with simple role categories is straightforward to configure. A system serving 5,000 employees across business units, geographies, and document classification levels requires careful permission architecture from day one. Scoping this explicitly before development begins prevents the most common source of project expansion mid-delivery.

Organisations that start with a focused pilot – one department’s document set or a specific internal knowledge domain – consistently reach measurable value faster than those attempting enterprise-wide deployment from launch. A successful pilot also builds organisational confidence in the system before broader rollout.

What Influences Enterprise Knowledge Base LLM Development Costs in India?

Enterprise knowledge base LLM development costs in India depend on deployment model, document volume, integration complexity, and access control requirements – with Indian development pricing offering a meaningful cost advantage over equivalent capability in Europe or the US.

Key Cost Factors:

- Deployment model: Cloud-based RAG systems on AWS or GCP cost less to build than on-premise private LLM deployments, which require additional infrastructure configuration, model quantisation, and hardware-specific optimisation. Organisations in regulated sectors requiring on-premise processing should factor this into project scoping from the start.

- Document volume and data preparation: Clean, consistently formatted document collections require less preparation effort than legacy archives with mixed formats, scanned PDFs, and multilingual content. Data preparation scope is the most variable cost factor across enterprise knowledge base projects.

- Integration complexity: A standalone knowledge base with a single document source costs significantly less than a system integrating Confluence, SharePoint, a proprietary ticketing system, and an ERP with real-time data retrieval.

- Access control architecture: Simple role-based access is straightforward to implement. Document-level or row-level security across a multi-permission, multi-department environment adds meaningful engineering complexity and testing scope.

- LLM selection: Cloud LLMs (GPT-4o, Claude, Gemini) carry ongoing API costs added to base infrastructure. Open-source deployments (LLaMA, Mistral) carry higher upfront infrastructure cost but lower operational cost for high-query-volume systems – often breaking even within 12-18 months of production use.
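
The break-even point in the LLM selection factor can be estimated with simple arithmetic. The sketch below assumes flat monthly costs; the function name is hypothetical and the figures in the usage line are placeholders in arbitrary currency units, not real pricing.

```python
import math
from typing import Optional


def breakeven_months(extra_upfront: float, monthly_api_cost: float,
                     monthly_selfhost_cost: float) -> Optional[int]:
    """Months until self-hosting's extra upfront spend is recovered.

    Returns None when self-hosting is not cheaper per month, because the
    upfront spend is then never recovered.
    """
    monthly_saving = monthly_api_cost - monthly_selfhost_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(extra_upfront / monthly_saving)


# Placeholder inputs only: 30,000 extra upfront, 3,000 per month in API fees,
# 1,000 per month to run the self-hosted model.
print(breakeven_months(30_000, 3_000, 1_000))  # 15
```

Because API fees scale with query volume, the monthly API figure is the input most worth estimating carefully before comparing proposals.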

Request detailed proposals from multiple companies on this list using the same scope document. Provide your document inventory, access control requirements, and integration map to enable accurate comparison. Companies experienced in enterprise knowledge base LLM development will separate data preparation costs from system build costs in their proposals. An unusually low headline price frequently means data preparation has been excluded from scope.

Frequently Asked Questions About Enterprise Knowledge Base LLM Development Companies in India

How does an enterprise knowledge base LLM system work for internal teams?

An enterprise knowledge base LLM system works by connecting a language model to your internal documents through a retrieval layer. When an employee asks a question, the system converts that query into a mathematical vector representation, searches the indexed document store for semantically relevant sections, retrieves the most relevant content, and feeds it to the LLM as context. The model then generates a direct answer in plain English, citing the source documents used. The model is instructed to answer only from what was retrieved – not from general training knowledge – which substantially reduces the risk of hallucinated or outdated responses. Role-based access controls ensure employees only receive answers from document sets they are already permitted to view, maintaining the same permission boundaries that govern direct document access.
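
The flow described above can be sketched in a few lines. This is a toy illustration, not a production design: a bag-of-words count stands in for a real embedding model, the document IDs and texts are hypothetical, and the final call to an LLM is left out so the example stays self-contained.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: Dict[str, str],
             top_k: int = 2) -> List[Tuple[str, str]]:
    """Rank chunks by similarity to the query and keep the top_k."""
    q = embed(query)
    ranked = sorted(chunks.items(),
                    key=lambda item: cosine(q, embed(item[1])), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, hits: List[Tuple[str, str]]) -> str:
    """Assemble the context and the instruction to cite source IDs."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return (
        "Answer using only the context below and cite the bracketed source IDs.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical document chunks keyed by source ID.
chunks = {
    "hr-leave-2024": "Employees accrue 18 days of paid leave per year.",
    "it-vpn-guide": "Install the VPN client before connecting remotely.",
    "hr-travel": "Travel claims must be filed within 30 days.",
}
question = "How many days of paid leave do employees get?"
hits = retrieve(question, chunks, top_k=1)
print(build_prompt(question, hits))
```

The prompt that comes out contains only the leave-policy chunk and its ID, which is what lets the model cite a source rather than answer from general knowledge.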

What is the difference between RAG and fine-tuning for enterprise knowledge bases?

Retrieval-Augmented Generation (RAG) keeps the base language model unchanged and retrieves relevant documents at query time to provide context for each response. Fine-tuning trains the model on your data so it internalises domain vocabulary, output format, and task behaviour. For enterprise knowledge bases, RAG is the standard starting point because it handles dynamic, frequently updated document collections without retraining – critical when policies and SOPs change regularly. Fine-tuning works better when consistent behaviour is required regardless of what is retrieved, such as a clinical documentation system that must always output in a specific medical format. Most production enterprise knowledge base systems use RAG as the primary architecture, with fine-tuning added selectively for specialised domains where retrieval alone does not produce sufficiently consistent outputs.

How do enterprise knowledge base LLM development companies in India ensure sensitive data stays private?

The strongest implementations run the language model on the organisation’s own infrastructure or a private cloud environment – so query content, retrieved document sections, and generated responses never touch a shared external server. Both the vector index and the LLM inference layer remain within the organisation’s controlled network. For less sensitive deployments, private cloud configurations on AWS or Azure Virtual Private Clouds provide isolation without full on-premise hardware requirements. Within the system, RBAC enforces document-level permissions so the retrieval layer only surfaces content the querying user is authorised to access. Indian enterprises in BFSI, healthcare, and government are increasingly specifying on-premise deployment as a procurement requirement under RBI AI governance guidance and the DPDP Act.
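
Permission-aware retrieval of the kind described above is often implemented by filtering candidates before ranking. The sketch below shows one possible shape for that filter, assuming each chunk carries the set of roles allowed to see its source document. The `Chunk` type, role names, and document IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_roles: FrozenSet[str]


def visible_chunks(chunks: List[Chunk],
                   user_roles: FrozenSet[str]) -> List[Chunk]:
    """Keep only chunks that share at least one role with the user."""
    return [c for c in chunks if c.allowed_roles & user_roles]


# Hypothetical store: salary bands are HR-only, the VPN guide is shared.
store = [
    Chunk("hr-salary-bands", "Salary bands by grade.", frozenset({"hr"})),
    Chunk("it-vpn-guide", "VPN setup steps.", frozenset({"hr", "engineering"})),
]

print([c.doc_id for c in visible_chunks(store, frozenset({"engineering"}))])
# ['it-vpn-guide']
```

Filtering before ranking means restricted text is never placed in the model's context, so it cannot leak into a generated answer.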

Which frameworks are used to build enterprise knowledge base LLM systems?

The most widely used frameworks among enterprise knowledge base LLM development companies in India are LangChain and LlamaIndex for LLM orchestration and RAG pipeline construction. Vector databases include Pinecone, Chroma, Weaviate, Qdrant, and Milvus – selected based on document scale, query latency requirements, and deployment environment. LLM options span proprietary models (GPT-4o, Claude, Gemini) for cloud deployments and open-source models (LLaMA, Mistral, Falcon) for on-premise or air-gapped requirements. Most production systems use hybrid search – combining vector semantic search with keyword-based retrieval and a reranking layer – rather than semantic search alone, which improves retrieval accuracy for both conceptual queries and exact-match lookups for specific terms, product names, or policy identifiers.
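
One common way to combine a vector ranking with a keyword ranking is reciprocal rank fusion (RRF). The sketch below is an assumption about how hybrid search might be fused, not a description of any listed vendor's system. The two input rankings are hypothetical, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists: each doc scores sum(1 / (k + rank))."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for stability.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical outputs of a semantic search and a keyword search.
vector_ranking = ["policy-a", "policy-b", "policy-c"]
keyword_ranking = ["policy-c", "policy-a", "policy-d"]

print(reciprocal_rank_fusion([vector_ranking, keyword_ranking]))
# ['policy-a', 'policy-c', 'policy-b', 'policy-d']
```

Documents that appear in both lists rise to the top, which is why fusion tends to help with exact-match lookups that semantic search alone can miss.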

How long does it take to build an enterprise knowledge base with LLM in India?

A production-ready enterprise knowledge base LLM system typically takes 3-5 months to build and deploy. Discovery and architecture planning takes 2-4 weeks. Data preparation and ingestion pipeline construction – cleaning, formatting, chunking, and embedding existing documents – accounts for 20-40% of total project time and is consistently the longest phase. The RAG system build, access control integration, and testing phases take an additional 6-10 weeks depending on integration scope, followed by 1-2 weeks for deployment and monitoring setup. Organisations with well-maintained, consistently formatted document collections complete projects significantly faster than those with legacy document archives that require substantial preparation work before ingestion can begin.
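
The chunking step mentioned above can be sketched as a fixed-size word window with overlap. This is one simple strategy among several, and the `chunk_words` helper and its parameters are hypothetical. Real pipelines often split on headings or sentences instead.

```python
from typing import List


def chunk_words(text: str, size: int, overlap: int) -> List[str]:
    """Split text into windows of `size` words that share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


print(chunk_words("a b c d e f g", size=4, overlap=1))
# ['a b c d', 'd e f g']
```

The overlap keeps a sentence that straddles a boundary retrievable from at least one chunk, at the cost of a somewhat larger index.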

How does an AI knowledge base compare to SharePoint or Confluence search?

Standard SharePoint and Confluence search returns a ranked list of links to documents that may contain the answer. The employee still opens the documents, reads through them, and extracts the relevant section manually, so most of the effort remains with the employee. An AI-powered knowledge base built on LLM technology reads the retrieved content and provides a direct, cited answer in plain English. The AI does the reading and synthesis; the employee receives the conclusion. For frequent, repetitive queries – policy questions, process clarifications, compliance checks, onboarding questions – the productivity difference is significant. For complex, novel queries requiring judgment, the AI knowledge base surfaces the relevant documents faster but human review of the source content remains valuable.

Which enterprise knowledge base LLM development companies in India offer private on-premise deployment?

Among the companies on this list, Softlabs Group, Openxcell, and DevsTree IT Services explicitly offer on-premise or private cloud LLM deployment for enterprise knowledge base systems. Signity Solutions also provides private LLM implementation as a declared service. On-premise deployment is typically required by organisations in BFSI, healthcare, defence, and government sectors where regulatory frameworks or internal data governance policies prohibit sensitive document content from being processed on third-party infrastructure. Indian enterprises subject to RBI AI governance guidelines and the Digital Personal Data Protection Act are increasingly specifying private deployment as a standard procurement requirement for any AI system that accesses internal documents.

Choosing the Right Enterprise Knowledge Base LLM Development Partner in India

The leading enterprise knowledge base LLM development companies in India on this list represent providers with specific, documented capability in RAG architecture, private LLM deployment, and enterprise knowledge retrieval – not generic AI vendors with a knowledge base checkbox on their services page. Each was verified for topic-specific proof before inclusion.

The technology is maturing fast. Vanilla RAG is being extended by GraphRAG and Agentic RAG for complex enterprise knowledge environments. The DPDP Act and RBI’s AI governance guidance are pushing regulated sectors toward private deployments that cloud-first vendors cannot support. And the evaluation benchmark has shifted from “does it work” to “what are the retrieval accuracy, latency, and hallucination metrics.” The custom LLM development companies in India on this list are positioned to answer those operational questions with evidence from real deployments.

Whether you are building an HR knowledge base for a 200-person organisation or a compliance retrieval system across 50,000 documents in a regulated financial institution, partner selection should prioritise documented deployment experience over website claims. The verification criteria used for this list – explicit service pages, live proof links, framework-specific expertise, and real access control implementation – apply equally when you evaluate vendors directly.

Build Your Enterprise Knowledge Base LLM Solution with Softlabs Group

Softlabs Group specialises in custom enterprise knowledge base LLM development tailored to your document architecture, access control requirements, and integration environment. The team combines 22+ years of enterprise system experience with expertise in LangChain, LlamaIndex, RAG pipelines, and private LLM deployment to deliver production-ready systems.

Whether you need a complete enterprise knowledge base platform from scratch, a private RAG deployment for regulated data, or want to explore AInfiniteCore – the enterprise knowledge base product from Ainfinite AI, Softlabs Group’s AI venture – our AI-assisted development approach delivers quality solutions 2-3x faster than traditional methods.