
In a world that is always changing, every day brings new trends in Information Technology. The year 2024 is no exception, revealing a range of IT trends that will change not only how businesses work, but also our daily lives.

It is crucial to stay updated with the current trends in the IT industry. It is not just about staying competitive; it is about thriving in a world that is becoming more digital by the minute. Every emerging trend in information technology brings both chances and challenges that could lead to success or failure for companies and people alike.

Getting to know these new trends in information technology is like having a map of this huge, ever-evolving digital world. It is about being ready for what is next and navigating the digital maze with ease. By staying informed about the latest technology trends, businesses and individuals can better prepare for future innovations and disruptions. So, let us dive in without delay.

What Interesting Things Await You in the Article?

- New tech breakthroughs added (June 2024 Updated)

- Optimus robot finally working in the real world

- Simple beginner flowcharts on how the tech works

- Special tips for startups & businesses by the ChatGPT CEO

- Updated market size data in each trend

- Elon Musk's Tesla drives on its own with neural nets

- Sam Altman on how types of AI will change society

- Images of real-world examples in AI/Robotics

- Data on job market growth with roles available

- Tips for how students, working & business professionals can navigate these trends.

Why You Should Read Our Information :

- Long-Standing Experience: Over 20 years in IT, specialising in AI services.

- ISO Certification: Our adherence to international standards ensures the quality of the information we provide.

- Research-Driven Insights: We dedicate substantial time to meticulous research, consulting multiple credible sources.

- Technological Pioneers: Our early adoption of emerging technologies like Blockchain, AI/ML, and IoT reflects our proactive approach to staying ahead in the IT landscape.

- Developed AI Solutions: Our dedicated development team has created numerous AI solutions, and the research gathered from these projects has significantly influenced our articles with valuable insights.

Here are the Latest Trends in Information Technology for 2024 – 2030 (Updated)

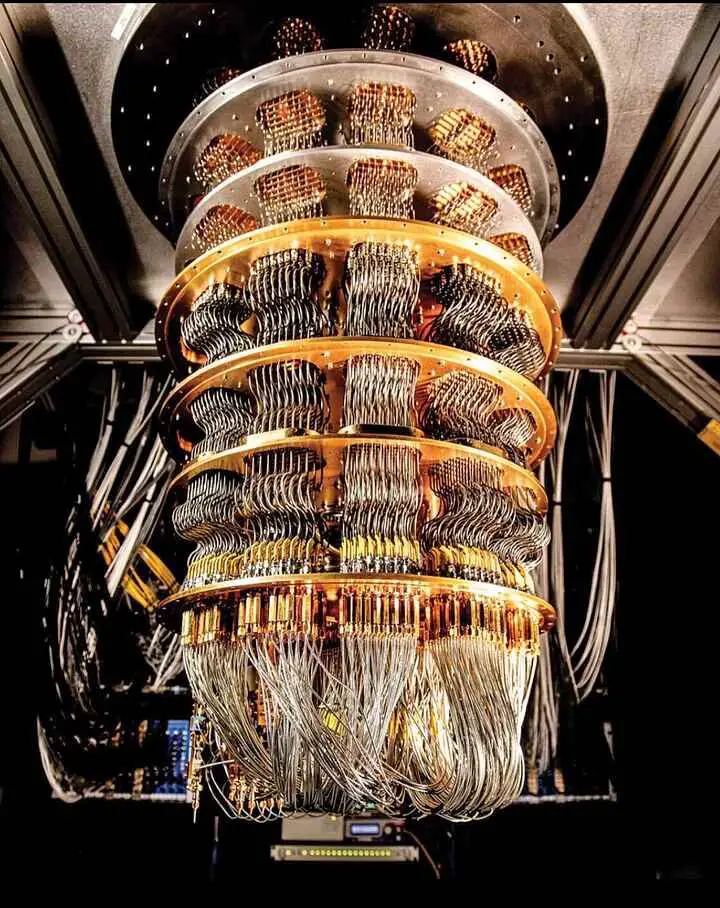

Trend 1: Quantum Computing

Image Source: Google Quantum Computer

The arrival of quantum computing is a big deal in the world of computers. Unlike regular computers, which use ‘bits’ that are each either a 0 or a 1, quantum computers use something called ‘qubits’. According to IBM, a qubit is special because it can be a 0, a 1, or a blend of both at the same time, a property known as superposition. This opens a whole new world of what computers can do, making things possible that we once thought were impossible.
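To make the "0, 1, or both" idea a little more concrete, here is a minimal Python sketch of a single qubit as a pair of amplitudes. It is only a toy simulation on a regular computer, not how real quantum hardware is programmed, and the function names `hadamard` and `measurement_probabilities` are made up for this illustration.

```python
import math

# A single qubit is described by two amplitudes: one for 0 and one for 1.
# Measuring it gives 0 or 1 with probability equal to each amplitude squared.

def hadamard(state):
    """Apply the Hadamard gate, which turns a definite 0 or 1 into an equal mix."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))

def measurement_probabilities(state):
    """Return (P(0), P(1)) for a qubit state."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)

zero = (1.0, 0.0)                 # a qubit that is definitely 0, like a classical bit
superposed = hadamard(zero)       # now "both at once": equal weight on 0 and 1
p0, p1 = measurement_probabilities(superposed)
print(p0, p1)                     # both are approximately 0.5
```

Until it is measured, the qubit carries both possibilities; measuring it yields a plain 0 or 1, each about half the time in this example.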

Applications of Quantum Computing :

In 2024, quantum computing is one of the current trends in the IT industry, and it is showing up most notably in the areas of :

- Cryptography

- Complex system simulation

- Optimization problems

For example, there is Shor’s Algorithm, a quantum algorithm for factoring large numbers that has been studied by researchers at MIT. It is a big deal because it could solve certain math problems far faster than our usual computers can. If large enough quantum computers can run it, our current online security systems, like RSA encryption, could become outdated, because they rely on math problems that are hard to solve quickly.
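To see why factoring matters here, the sketch below walks through the number-theory idea behind Shor's Algorithm on a regular computer. The quantum part of the real algorithm is the order-finding step; in this toy version that step is done by brute force, so it only works for tiny numbers. The function names are invented for the example.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step a quantum computer would speed up. Done classically
    like this, it becomes hopeless for the large numbers used in RSA.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_via_order(n, a):
    """Try to split n using the order of a modulo n. Returns a factor pair or None."""
    g = gcd(a, n)
    if g != 1:
        return (g, n // g)        # lucky guess: a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None               # odd order: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None               # unhelpful case: try a different a
    p = gcd(half - 1, n)
    return (p, n // p)

print(factor_via_order(15, 7))    # (3, 5)
```

For 15 and the guess 7, the order is 4, and that is enough to recover the factors 3 and 5. RSA keys are built from numbers hundreds of digits long, which is where the brute-force loop stops being practical.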

Furthermore, quantum computing is emerging as a linchpin in :

- Simulating molecular structures

- Potentially accelerating the discovery of new materials and drugs

This new tech is helpful, especially when we are trying to fix big problems like climate change and diseases, just like Nature Research mentioned.

The industry is also witnessing a proliferation of quantum alliances among :

- Academia

- Tech behemoths

- Government bodies

These team-ups, mentioned by Quantum Computing Report, are working to overcome the challenges of quantum computing like fixing mistakes and keeping qubits stable, getting us closer to mastering quantum computing.

Engaging with quantum technology involves :

- A steep learning curve

- The readiness to embrace a paradigm shift in computational thinking

For professionals and businesses, the quantum wave signifies a call to action to :

- Invest in quantum literacy

- Align strategies with quantum advancements

- Foster collaborations to explore the quantum frontier

Quantum computing is getting closer to being something we can use, and it is important for people involved to understand what this means and what could come from it. Sure, there might be some bumps along the way, but the new power of quantum computing could change the way we solve problems and come up with new ideas.

It is more than just a cool tech upgrade; it shows how curious we are and how we never stop trying to do better and reach further. As we get ready for this big change, we are looking at a future full of amazing chances to use this new computing power to tackle some of the world’s biggest issues.

Recent Updates / Breakthroughs in Quantum Computing ( 2024 Updated )

Atom-Based Quantum Computers

Researchers at TU Darmstadt have advanced “quantum bit supercharging,” using over 1,000 atomic qubits, the basic units of quantum computers. According to the researchers, this development improves laser performance and might lead to the use of up to 10,000 qubits, enabling faster computations for industries like healthcare, finance, and logistics.

Transition to Error-Corrected Logical Qubits

Moving from physical to error-corrected logical qubits has made quantum computing more stable and less prone to errors. This allows quantum processors to handle larger datasets and perform accurate calculations, revolutionizing drug discovery, climate modeling, and AI, detailed in an interview with Yuval Boger.

Hybrid Quantum-Classical Applications


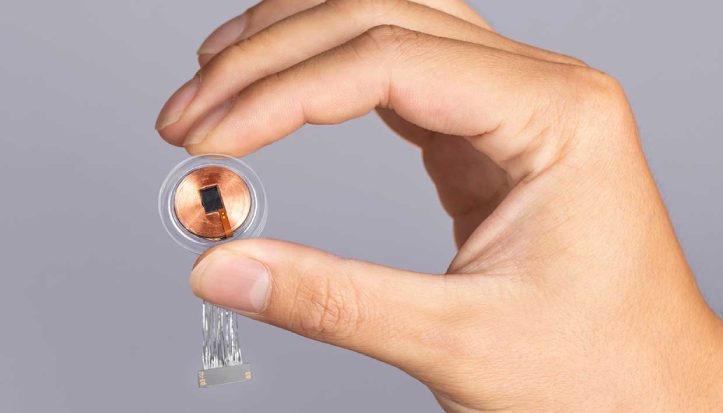

Quantum System-on-Chip

Researchers at MIT and Mitre Corporation have developed a quantum system-on-chip, improving control over large qubit arrays. This innovation addresses scalability and control issues, paving the way for practical quantum computers used in applications like encryption and scientific research.

Careers in Quantum Computing :

- Job Growth: The demand for quantum computing skills is rising, with notable growth in job postings related to quantum computing roles, as reported by Indeed.

- Roles: Quantum Algorithm Developer, Quantum Hardware Engineer, and Quantum Software Engineer are among the sought-after positions.

- Employment Opportunities: Companies like IBM and Google are pioneering quantum computing, creating numerous job opportunities in this burgeoning field.


Opinion of a Quantum Computing Expert:

Here’s Michio Kaku, a renowned theoretical physicist, on why quantum computing is the next revolution

Trend 2 : 5G & Beyond

The journey to get faster and better internet has taken us from 4G to 5G. Now, everyone is already looking at what is next after 5G.

Impact of 5G :

- The introduction of 5G, the latest generation of wireless technology, stands out among the recent trends in IT. It significantly increases data transfer speeds, reduces latency, and lets more devices connect simultaneously without compromising performance. That can bring more people online and make a bigger impact in the upcoming creator economy.

- As per GSMA, 5G is poised to contribute $2.2 trillion to the global economy over the next 15 years.

- Its impact is being felt across a myriad of sectors including healthcare, automotive, and the Internet of Things (IoT).

For example, 5G is making it possible for doctors to check on patients from far away in real-time, helping self-driving cars work better, and making our cities smarter and more connected.

Anticipation of 6G :

People are already talking about 6G, the next big thing trending in IT, even though it is not expected to be ready for everyone until 2030. But the smart folks who create this stuff are already getting started on making 6G happen.

Based on what ScienceDirect and the University of Oulu have found, 6G isn’t just going to make our internet faster. It is also going to bring in new features that could change the way we talk and share things online. Imagine being able to connect in a 3D world or chat with holograms!

Investments and Strategic Planning :

Moving to 5G and beyond means spending a lot of money on research and development and on building the right infrastructure.

For businesses and people working in this field, it is important to keep up with these new changes. This helps in making smart plans and staying ahead in the online world.

Policy Frameworks and International Collaborations :

Also, national laws and international collaborations will shape how 5G and 6G technologies grow.

Right now, regulatory bodies and organizations are setting up rules to make sure this new tech can grow and work well together all over the world.

The story of 5G and what comes next is tied to the bigger picture of global digital change. It’s about making a world where solutions based on data can do well, thanks to super-fast and reliable internet. This shows the importance of never-ending improvements in tech, making sure that being connected continues to be at the heart of our modern world, supporting new tools that make our everyday lives better and help our economy grow.

Recent Updates / Breakthroughs from 5G to 6G ( 2024 Updated )

Amplification of Terahertz Waves:

Researchers at UNIST have developed a method to amplify terahertz (THz) electromagnetic waves by over 30,000 times using a new THz nano-resonator and AI-based inverse design. This advancement, detailed in an ACS publication, enhances field strength, enabling ultra-precise detectors and sensors, which can significantly benefit medical diagnostics and environmental monitoring.

Ultra-High-Speed 100 Gbps Transmission:

According to NTT DOCOMO’s news release, NTT DOCOMO, NTT, NEC, and Fujitsu have developed a sub-terahertz 6G device capable of transmitting data at 100 Gbps in the 100 GHz and 300 GHz bands at distances of up to 100 meters. That rate is approximately 20 times faster than current 5G networks.
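To get a feel for what "20 times faster" means in practice, here is a small back-of-the-envelope calculation in Python. The 5G baseline of 5 Gbps is not a measured figure; it is simply what the "20 times" claim implies (100 Gbps divided by 20), and the 50 GB file is a hypothetical example. Real-world speeds would be lower because of overhead and signal conditions.

```python
def transfer_seconds(size_gigabytes, rate_gbps):
    """Seconds to move a file at a given line rate (ideal case, no overhead)."""
    size_gigabits = size_gigabytes * 8    # 1 byte = 8 bits
    return size_gigabits / rate_gbps

SIX_G_DEMO_GBPS = 100                     # rate reported for the sub-terahertz device
FIVE_G_GBPS = SIX_G_DEMO_GBPS / 20        # assumption: baseline implied by "20 times faster"

file_gb = 50                              # hypothetical 50 GB download
print(transfer_seconds(file_gb, FIVE_G_GBPS))       # 80.0
print(transfer_seconds(file_gb, SIX_G_DEMO_GBPS))   # 4.0
```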

China’s Advances in 6G:

As per a South China Morning Post report, a Chinese team has developed 6G technology for hypersonic weapons, addressing communication blackout problems. Additionally, early applications for 6G are expected to be launched by 2025, with commercial rollout anticipated by 2030.

AI-Driven 6G Networks:

At MWC 2024, the AI-RAN Alliance, involving major companies like Samsung and Vivo, announced initiatives to integrate AI with 6G networks. This integration aims to optimize network performance and enable new applications, highlighting the role of AI in the evolution of 6G technology.

Careers in 5G Industry :

- Job Growth: Demand for 5G-related roles is surging, especially in network engineering and infrastructure development.

- Roles: 5G Network Engineer, 5G RF Engineer, and Telecom Project Manager.

- Employment Opportunities: Companies like Ericsson, Huawei, and Qualcomm are at the forefront, offering numerous job opportunities in 5G technology.

Trend 3: Artificial Intelligence (AI) and Machine Learning (ML)

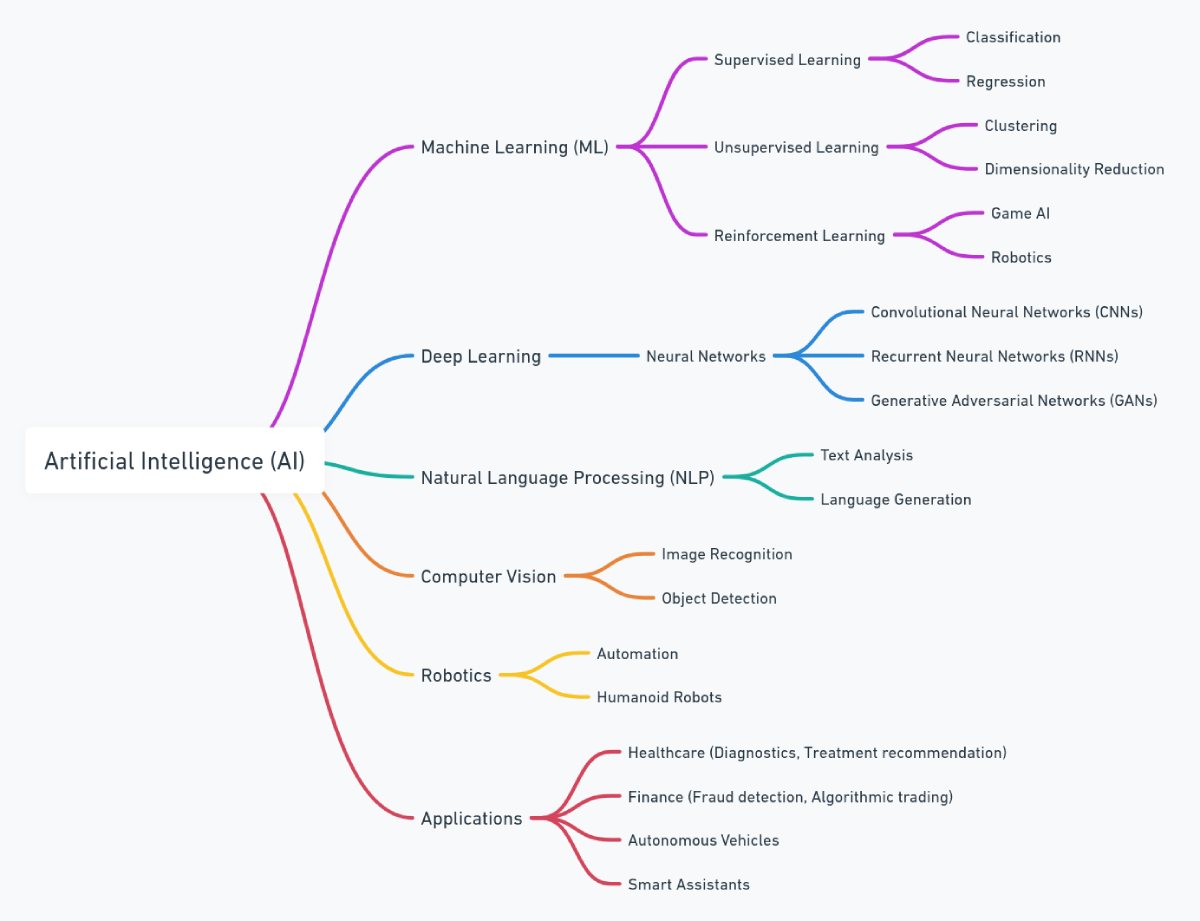

The world of Artificial Intelligence (AI) and Machine Learning (ML) keeps growing, bringing fresh new ideas to many different areas. As we explore this fascinating field, it’s important to understand the various types of AI, such as ANI, AGI, and ASI, each with its own capabilities and potential impacts.

Here’s a simple mind map of AI world for you:

At the core, AI and ML are about creating systems capable of:

- Learning

- Adapting

- Performing tasks that traditionally required human intelligence

These technologies are useful because they can find patterns in large amounts of data. This helps people make informed decisions and carry out predictive analysis.
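As a tiny illustration of "finding a pattern and using it to predict", the Python sketch below fits a straight line to a handful of made-up numbers and then predicts the next value. Real ML systems use far richer models and far more data; the dataset and the `fit_line` helper here are purely hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data: monthly ad spend and resulting sales (both in thousands).
spend = [1, 2, 3, 4, 5]
sales = [12, 14, 16, 18, 20]

slope, intercept = fit_line(spend, sales)   # the "learned" pattern
predicted = slope * 6 + intercept           # prediction for a spend of 6
print(slope, intercept, predicted)          # 2.0 10.0 22.0
```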

According to a report by McKinsey, AI could really help the world’s economy. By 2030, it could add about $13 trillion to the global economy, making it grow faster by about 1.2% each year. The transformative potential of AI and ML is palpable in areas like:

- Healthcare : Aiding in early disease detection and personalized treatment plans.

- Financial sector : Employing AI-driven algorithms for fraud detection, risk assessment, and customer service enhancements.

The road to getting the most out of AI and ML is all about getting better at analyzing data and having stronger computers. NVIDIA, a key player in powering AI computations, shows how powerful graphics cards and smarter neural network designs have helped machine learning get better.

Ethical considerations are also paramount as we advance in the AI landscape. Discussions around:

- Data privacy

- Bias mitigation

- Transparent algorithmic decision-making

are crucial to building trust and ensuring responsible AI deployment. Initiatives like the EU’s Ethics Guidelines for Trustworthy AI play a pivotal role in steering the discourse and practices towards ethical AI.

For businesses and people, learning about AI and understanding the rules around it is important. It might be a good idea to team up with an AI development company that knows a lot about AI & helps you move into the future. This is not just about techy stuff—it is also about doing what is right and following the law. The story of AI is like an ongoing adventure in the online world. As we step into 2024, it brings fresh ideas and helps us grow in a good way. It paints a picture of a society where digital tools help us in our daily lives.

Recent Updates in Artificial Intelligence ( 2024 Updated ) :

1. Apple Integrated AI at WWDC 2024

Apple introduced “Apple Intelligence” at WWDC 2024, which integrates AI across iOS 18, macOS, and other platforms. Features include smarter email categorization, enhanced language tools for writing, and AI-generated image creation. These tools aim to improve productivity and personalization while maintaining user privacy.

2. New GPT-4o model with Sky voice assistant

OpenAI recently launched GPT-4o, a new AI model that allows real-time communication through voice, video, and text. It integrates these capabilities into one model, making interactions faster and more seamless. One of the standout features is the Sky voice assistant, which offers human-like voice interactions and can handle complex prompts, making conversations more natural and engaging.

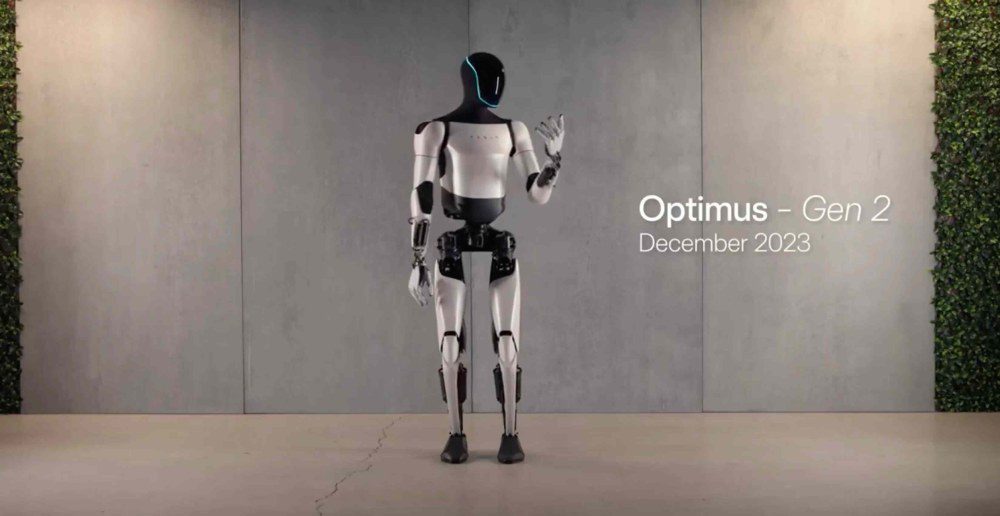

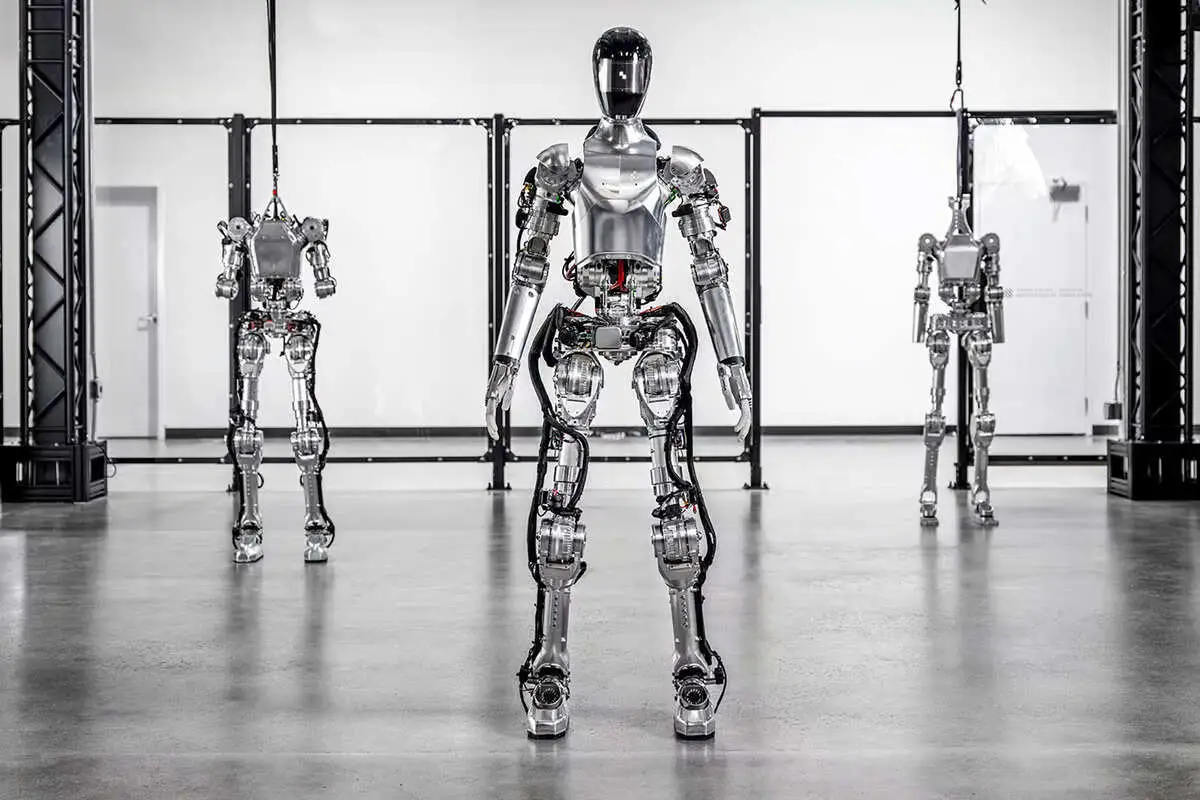

3. Humanoid Robots Tested in Real World

Tesla has officially started testing its robot, Optimus, at its factories. According to CEO Elon Musk, it will cost $10K-$20K each in the coming years.

4. The Safety Alignment team for AGI has left OpenAI

OpenAI recently disbanded its Safety Alignment team, known as the Superalignment team, which was focused on ensuring the safety of advanced AI systems. This decision followed the departure of key leaders, including co-founder Ilya Sutskever and safety expert Jan Leike.

5. China’s Kling to compete with OpenAI’s Sora

Chinese tech company Kuaishou has launched Kling, a text-to-video AI model designed to compete with OpenAI’s upcoming Sora. Kling can generate videos up to two minutes long in 1080p resolution at 30 frames per second.

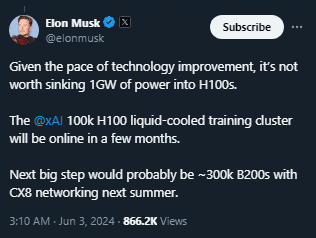

6. Elon Musk’s compute goal for training AI models at xAI


Careers in AI & ML:

- Job Growth: The AI job market is expanding, with a 20-fold increase in job postings requiring generative AI skills, as per Monster.

- Roles: Positions such as Data Scientist, Machine Learning Engineer, and AI Research Scientist are highly sought after.

- Employment Opportunities: Top AI companies and tech giants like OpenAI, Microsoft, Google, Tesla & Anthropic continue to lead in AI & ML innovation, offering numerous job opportunities in this field.

Opinion of an AI Expert:

Here’s Sam Altman (CEO of OpenAI) talking about AI changing society on the Joe Rogan podcast

Real-World Examples of AI: Both as a chatbot & robots

ChatGPT

Tesla Optimus robot

Figure AI robot

Here are some innovative AI solutions developed by us, to solve real-world problems. Come check out!

Trend 4: Blockchain

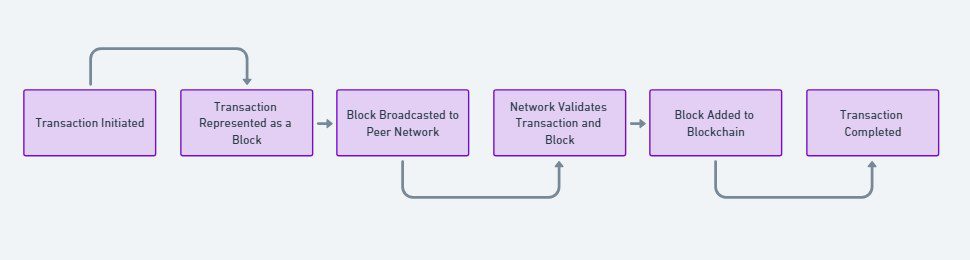

Blockchain used to be known only for things like Bitcoin, but it has now grown way beyond that, showing us it can do a lot more in different areas. Basically, blockchain is like a shared digital notebook. Everyone has a copy, so when something gets written in the notebook, everyone’s copy gets updated. This makes it hard for someone to cheat or change what was written down, keeping things clear and safe.
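The "hard to cheat" part of the notebook idea can be shown in a few lines of Python. In this simplified sketch each entry stores a fingerprint (hash) of the entry before it, so changing an old entry breaks the chain. It leaves out everything that makes a real blockchain distributed (networking, consensus, mining), and the names `add_block` and `is_valid` are invented for the example.

```python
import hashlib

def block_hash(index, data, prev_hash):
    """Fingerprint of a block: any change to its contents changes the hash."""
    payload = f"{index}|{data}|{prev_hash}".encode()
    return hashlib.sha256(payload).hexdigest()

def add_block(chain, data):
    """Append a new entry that points at the hash of the previous one."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    index = len(chain)
    chain.append({"index": index, "data": data, "prev_hash": prev_hash,
                  "hash": block_hash(index, data, prev_hash)})

def is_valid(chain):
    """Recompute every hash and check each block points at the one before it."""
    for i, block in enumerate(chain):
        expected_prev = chain[i - 1]["hash"] if i else "0" * 64
        if block["prev_hash"] != expected_prev:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], block["prev_hash"]):
            return False
    return True

ledger = []
add_block(ledger, "Alice pays Bob 5")
add_block(ledger, "Bob pays Carol 2")
print(is_valid(ledger))                     # True

ledger[0]["data"] = "Alice pays Bob 500"    # someone tries to rewrite history
print(is_valid(ledger))                     # False
```

In a real network, every participant can run a check like `is_valid` on their own copy, which is why a quiet edit to one copy does not go unnoticed.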

Here’s a simplified flow that we made for you to understand:

What about the economic impact?

- A PwC report suggests a potential boost to global GDP by $1.76 trillion by 2030 through blockchain applications in various fields.

Applications of Blockchain:

1. Supply Chain Management:

- Real-time tracking of goods and transactions.

- Enhanced transparency and reduced fraud.

- Companies like IBM offer blockchain solutions to streamline processes.

2. Financial Sector:

- Revolutionizing transaction methods and record-keeping.

- Reduction in reliance on intermediaries, lowering costs.

- Projects like Ethereum extend capabilities through smart contracts.

3. Digital Identity Management:

- Secure and unforgeable management of digital identities.

- This is particularly crucial amid rampant data breaches and identity theft

As blockchain matures, the debate about how to regulate it is heating up. Governments and large organizations are still working on the rules, and blockchain users and dApp builders all over the world remain unsure about where governments stand.

For workers and businesses, it is important to learn about blockchain, what it can do, and the new rules around it to stay ahead in the online world. Working with a Blockchain Development Company can help with the know-how and build solutions to get ahead in it.

The story of blockchain shows our ongoing effort to make online dealings and data handling safer, clearer, and better. Heading into 2024, blockchain and Web3 keep changing, aiming for a digital world that is more open, safe, and decentralized.

Recent Updates / Breakthroughs in Blockchain (2024 Updated)

AI Integration with Blockchain

Platforms like Fetch.ai are merging artificial intelligence with blockchain technology to develop decentralized, autonomous AI networks. This innovative integration boosts data analytics, improves customer experiences, and enhances fraud detection capabilities.

CBDCs and Programmable Payments

As per a report by Quant, Central Bank Digital Currencies (CBDCs) are gradually being introduced, transforming payment systems by offering increased security, transparency, and efficiency. Banks like JP Morgan are implementing programmable payments with their digital currency, JPM Coin, enabling real-time treasury functionality and new digital business models.

Institutional Adoption and Regulation

Major financial institutions like BlackRock, JP Morgan, and Goldman Sachs are increasingly adopting blockchain technology. This shift is supported by new regulations in Japan, Singapore, and the EU, ensuring the security and stability of digital assets. According to a WEF report and a Quant Network perspective, these regulations are key to fostering blockchain’s integration into mainstream finance.

Careers in Blockchain :

- Job Growth: Between October 2023 and January 2024, the number of new blockchain-related jobs shrank by 8.42%, as per GlobalData. But I believe that with the Bitcoin halving in 2024 and the FTX scam flushed out of the market, the industry is set for clean, sustainable growth again.

- Roles: Blockchain Developer, Smart Contract Engineer, Blockchain Consultant…

- Employment Opportunities: Companies like Consensys, Coinbase, Chainlink, Binance, Polygon & Solana Foundation are at the forefront of blockchain innovation, offering numerous job opportunities.

Expert Opinion on Blockchain Potential:

Here’s Balaji Srinivasan, a renowned blockchain enthusiast & former Coinbase CTO, on how blockchain changes everything

Trend 5: Cybersecurity

In today’s digital world, data is almost like money, and that’s why cybersecurity is super important. It is all about keeping our information safe. Just as bad guys are always coming up with new tricks, cybersecurity keeps changing to protect us better from all kinds of threats.

- Cybercrime is predicted to cost the world $9.5 trillion USD in 2024, as per eSentire. This figure shows just how much we need to toughen up our online security on all digital platforms.

One of the notable advancements in cybersecurity is the adoption of AI and ML to enhance threat detection and response. Companies like Darktrace are pioneering in employing AI to detect, respond to, and mitigate cyber threats in real-time.

- The emergence of Zero Trust Architecture, which operates on the principle of ‘never trust, always verify’, is gaining traction as a holistic approach to secure organizational networks.

The National Institute of Standards and Technology (NIST) provides a framework to understand and implement this model to thwart potential cyber-attacks.
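The ‘never trust, always verify’ principle can be sketched in a few lines of Python: every single request is checked for identity, device health, and permission, and being "inside the network" earns nothing. This is only an illustration of the idea, not NIST's framework or any vendor's product; the `Request` fields and the `ALLOWED` policy table are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Request:
    user: str
    token_valid: bool         # did the identity check pass for this request?
    device_compliant: bool    # is the device patched and managed?
    resource: str

# Hypothetical policy: which users may reach which resources.
ALLOWED = {"alice": {"payroll-db"}, "bob": {"wiki"}}

def authorize(req: Request) -> bool:
    """Check every request in full; network location grants nothing."""
    if not req.token_valid:
        return False
    if not req.device_compliant:
        return False
    return req.resource in ALLOWED.get(req.user, set())

print(authorize(Request("alice", True, True, "payroll-db")))    # True
print(authorize(Request("alice", True, False, "payroll-db")))   # False
print(authorize(Request("bob", True, True, "payroll-db")))      # False
```

Note that the same user is refused as soon as the device check fails, and a valid user is still refused for a resource outside their policy.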

- Moreover, the escalation of remote working has accentuated the need for enhanced cybersecurity frameworks.

Setting up a system like Secure Access Service Edge (SASE) is like putting together a security guard and a speedy delivery service for your computer network. It makes sure only the right people get in, while also making data travel fast from one point to another. This setup helps businesses stay safe and work efficiently, just like what the experts at Gartner explained.

Following regulations and working together with other governments are really helping to make the internet a safer place all over the world.

Governments and institutions are continually updating cybersecurity laws and frameworks to stay ahead of potential threats.

For businesses and people who work there, it is important to keep up with the latest ways to stay safe online. This means knowing about new threats and spending money on the best safety tools to keep their digital stuff safe and their business running smoothly.

The story of keeping our online world safe is like a never-ending fight against invisible bad guys. As we move from 2023 into 2024, getting better at online safety is not just about stopping threats, but about building a strong digital community. This way, both businesses and regular folks can trust the digital world to be a safe place.

Careers in Cybersecurity :

- Job Growth: Global cybersecurity job vacancies grew by 350 percent, from one million openings in 2013 to 3.5 million in 2021, according to Cybersecurity Ventures.

- Roles: Cybersecurity Analyst, Information Security Manager, Security Engineer, etc.

- Employment Opportunities: Leading companies in cybersecurity like Symantec, Check Point Software Technologies, and McAfee are constantly on the lookout for skilled professionals.

Trend 6: Augmented Reality (AR) and Virtual Reality (VR)

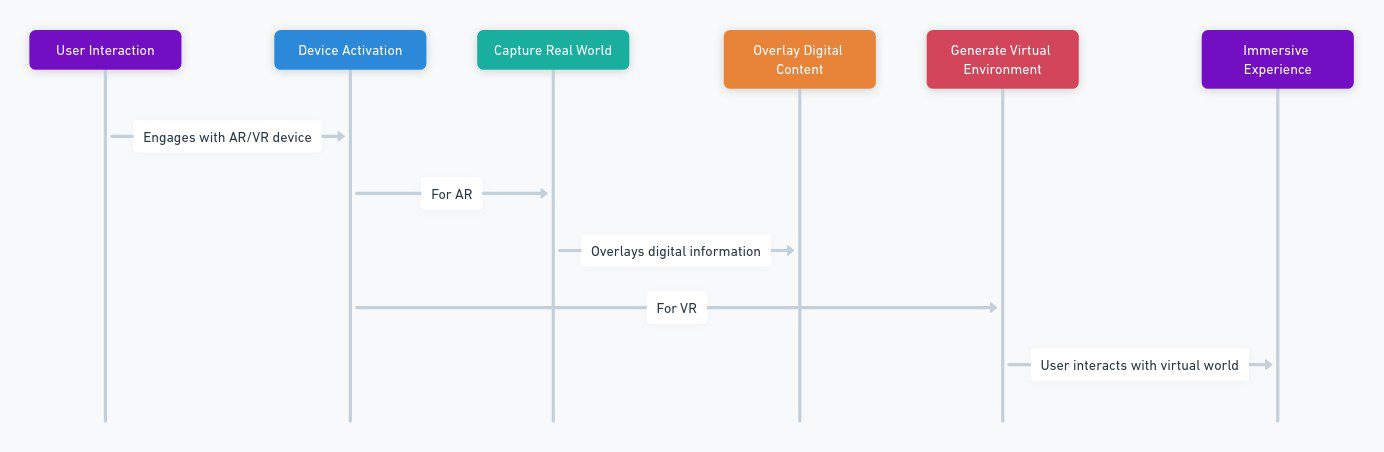

In today’s world, the digital and real worlds are blending more and more. Augmented Reality (AR) and Virtual Reality (VR) are like magic doors to fun and interactive experiences. AR puts digital stuff, like images or info, into our real world using gadgets like smartphones or special glasses. But VR takes you into a totally digital world, kind of like jumping into a video game, usually by wearing a VR headset.

Here’s a chart showing how it works :

The global AR and VR market is on a robust growth trajectory. According to a report by Statista, the market size of AR and VR is expected to reach $296.9 billion by 2024, showcasing a world ripe for digital interactivity.

AR & VR in Education:

- AR and VR offer interactive, engaging learning experiences, making complex subjects more understandable.

- Companies like ClassVR are pioneering in providing VR classroom experiences that enhance learning and retention.

AR & VR in Healthcare:

- Aid in complex surgeries through AR-guided systems.

- VR-based therapy for post-traumatic stress disorder.

- Renowned institutions like Cedars-Sinai have acknowledged the potential of VR in pain management and patient care.

AR & VR in Entertainment:

- Reshaping interaction with digital content through immersive gaming and movie experiences.

- Headsets like Meta Quest are at the forefront, pushing the boundaries of virtual entertainment. Moreover, Mark Zuckerberg joined Lex Fridman on his podcast, and the two recorded the first podcast in the metaverse. It showed that Meta’s recent development in photorealistic avatars is set to elevate VR communications, making virtual interactions feel incredibly real and intuitive by capturing and portraying subtle facial expressions.

AR & VR in Industrial Training, Real Estate, and Retail:

- Offering virtual tours, training simulations, and virtual try-on experiences.

As AR and VR get better, the focus is on making them easier and more comfortable to use, while also making sure they are safe and private.

For businesses and people who work with tech, getting to know what AR and VR can do is important. It is like learning to use new tools to do cool things. It is also good to have a plan on how to use these tools and to know the rules so you do not get into trouble. This way, you are ready to explore and do well in this new digital world.

The story of AR (Augmented Reality) and VR (Virtual Reality) is about blurring the lines between the online world and the real world, making way for new ideas and experiences. As we move from 2023 into 2024, AR and VR are changing how we interact with digital content, making everything more fun and interactive.

Recent Updates / Breakthroughs in AR & VR (2024 Updated)

OLED Tech on Silicon

OLEDoS technology marks a major advancement in display tech for AR and VR devices, using silicon-wafer-based CMOS substrates to achieve ultra-high resolutions (3000-4000 ppi), high luminance, and fast response times. As per Counterpoint Research, this innovation enables smaller, lighter, and more efficient AR/VR headsets.

Cloud-Based Processing for VR

As per a StartUs Insights report, cloud-based processing is transforming VR by moving heavy computational tasks to the cloud, enabling high-quality VR experiences without expensive local hardware. This technology supports collaborative VR environments and high-fidelity applications, making VR more accessible and scalable.

Volumetric VR for 3D Scenes

Volumetric VR captures 3D scenes using multiple cameras, allowing users to explore realistic and interactive environments from any perspective. This technology enhances social media, education, and entertainment by providing highly immersive 3D experiences. As per a report by StartUs Insights, it significantly improves user engagement in various fields.

Pancake Optics System for Better VR Display

A new pancake optics system has been created to enhance VR displays by increasing optical efficiency and compactness. This innovation improves image contrast and expands the spectral response, resulting in lighter, more power-efficient VR headsets. As per a phys.org report, this breakthrough could greatly influence the future design and usability of VR devices.

New Mobile platform for Creating AR Content

Blippar has launched Blippbuilder Mobile, a no-code platform for creating AR content on mobile devices. This tool democratizes AR content creation, allowing users and developers to easily create and deploy AR experiences without needing extensive technical knowledge. As per the AR news report, this innovation makes AR development more accessible to a wider audience.

Careers in AR and VR :

- Job Growth: Globally, it is forecasted that over 23 million jobs will be enhanced by virtual reality (VR) and augmented reality (AR) technologies by 2030, as per Statista.

- Roles: AR/VR Developer, AR/VR Content Creator, AR/VR Hardware Engineer…

- Employment Opportunities: Companies like Oculus, Sony, and Magic Leap are leading in AR/VR technology, offering numerous job opportunities.

Mark Zuckerberg on AR VR Potential :

Here’s Mark Zuckerberg launching and demoing the Quest 3, an AR & VR headset, at Meta Connect 2023

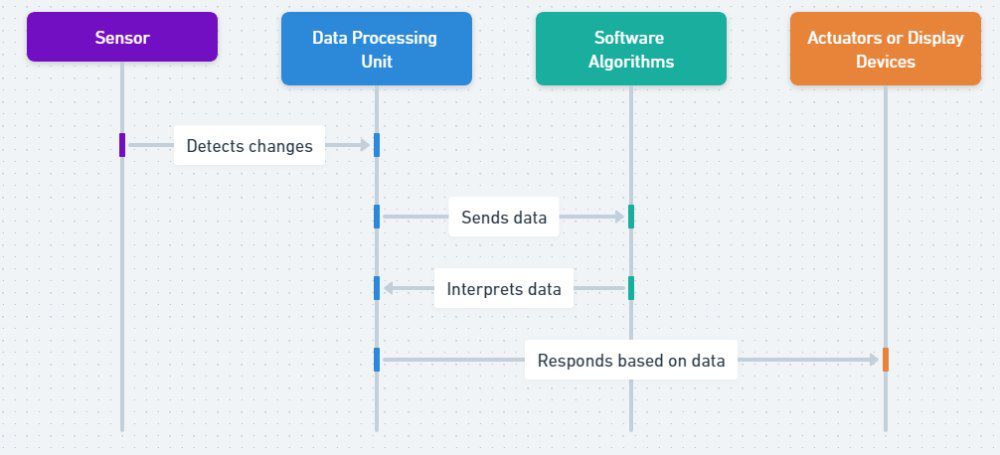

Trend 7: Internet of Things (IoT)

The story of IoT (Internet of Things) is like a new episode in the big adventure of turning everything digital. It paints a picture of a future where smart gadgets talk to each other to make things work better, spark new ideas, and make life easier. As we move through 2024, IoT is helping to create a world where everything is linked up, smarter, and works smoothly.

Growth Projection:

- A report by Statista says that by 2025, nearly 31 billion gadgets like phones and smart fridges will be able to talk to each other over the Internet. This shows how these smart gadgets are becoming a normal part of our daily lives.

Its Impact on Smart Homes:

- IoT is revolutionizing how we interact with our living spaces.

- Examples include intelligent thermostats like Nest, and smart security systems providing real-time monitoring and alerts, enhancing the comfort and security of homes.

Industrial Applications for IoT:

- IoT is the linchpin of Industry 4.0, driving operational efficiencies through predictive maintenance, real-time monitoring, and automation.

- Companies like Siemens are offering IoT-enabled industrial solutions that optimize processes and reduce operational costs.

Integration in Healthcare:

- Smart wearable devices enable continuous monitoring of patients’ health parameters.

- IoT-based systems improve the management and traceability of medical assets.

Security Concerns:

- The proliferation of IoT devices accentuates concerns about data privacy and security.

- Robust security protocols and addressing privacy issues are paramount to building trust and facilitating wider adoption of IoT technologies.

For Businesses and Professionals:

- Comprehending the potential of IoT, aligning with evolving standards, and investing in IoT security are critical steps towards harnessing the benefits of interconnected digital ecosystems.

The narrative of IoT is an unfolding chapter in the saga of digital transformation, showcasing a future where intelligent, interconnected devices enhance operational efficiency, drive innovation, and improve quality of life. As we journey through 2024, the evolution of IoT sets the stage for a more connected, intelligent, and efficient world.

Careers in Internet of Things (IoT) :

- Job Growth: According to Fortune Business Insights, the global Internet of Things (IoT) market is projected to grow from $662.21 billion in 2023 to $3352.97 billion by 2030, at a CAGR of 26.1%. These trends indicate a growing demand for IoT professionals across various industries.

- Roles: IoT Developer, IoT Solutions Architect, IoT Security Specialist, System Integration Engineer, and Data Scientist.

- Employment Opportunities: Leading innovators like Microsoft, IBM, Amazon Web Services (AWS), and Cisco are at the forefront, providing numerous job openings.

Trend 8: Edge Computing

As the online world grows, we need faster ways to handle all the information that is being created. That is where Edge Computing comes in. Instead of sending all the data far away to a big computer centre to be sorted out, Edge Computing deals with it right there where it is created. This way, it cuts down on delay and does not hog the internet, making things run smoothly and giving us quick updates, so everything performs better.
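To make the idea concrete, here is a minimal Python sketch of edge-side processing. It is an illustration only: the function name `summarize_at_edge`, the temperature readings, and the threshold are all made up for this example and do not come from any real product. The point is that the device works through the raw readings locally and sends only a small summary upstream.

```python
from statistics import mean


def summarize_at_edge(readings, threshold):
    """Process raw sensor readings on the device and return a compact summary.

    Only this small dictionary would be sent to the central data centre,
    instead of every individual reading. (Hypothetical helper for illustration.)
    """
    anomalies = [r for r in readings if r > threshold]
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        "anomalies": anomalies,  # readings above the alert threshold
    }


# Assumed sample data: five temperature readings from one sensor.
raw_readings = [21.0, 21.5, 22.0, 35.0, 21.0]
summary = summarize_at_edge(raw_readings, threshold=30.0)
print(summary)
```

In a real deployment the summary would go out over a network call and the raw readings could be discarded or kept locally for a short time. That trade-off is what cuts both delay and bandwidth.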

Here’s how it works in a simplified way:

Market Growth:

- A report by MarketsandMarkets says that the global edge computing market size is expected to grow from $53.6 billion in 2023 to $111.3 billion by 2028.

Sectoral Impact:

- In sectors like manufacturing and logistics, edge computing is proving to be a catalyst for real-time analytics and predictive maintenance.

- Healthcare is another sector where edge computing is making significant inroads. For instance, Philips’ eCareManager utilizes edge computing to provide critical care analytics.

Technological Synergy:

- The symbiosis between edge computing and 5G technology is expected to further accelerate the deployment and efficiency of both technologies, creating a conducive environment for ultra-low latency applications and enhanced data processing capabilities.

Challenges:

- Before we can get all the benefits of edge computing, though, a few things need figuring out: how to keep our information safe, how to respect privacy, and how to cover the setup costs.

For businesses and people, getting to know how edge computing works is important. It’s like keeping up with the latest tech changes and solving any problems that come with it. This way, they can use edge computing to do things better and faster.

Edge computing is about finding faster and easier ways to work with data. As we go from 2023 to 2024, it is improving, making things quicker, working smoother, and giving us helpful info right away, which could change how we handle and make sense of data.

Careers in Edge Computing :

-

Job Growth :

With the market projected to reach $111.3 billion by 2028, demand for professionals in this field is growing, so keep an eye out for job openings online.

-

Roles :

Edge Computing Specialist, Edge Solutions Architect, Edge Network Engineer…

-

Employment Opportunities :

Leading companies like IBM, Cisco, and AWS are pioneering in edge computing, offering numerous job opportunities to cater to the growing market demands.

Trend 9: Autonomous Vehicles

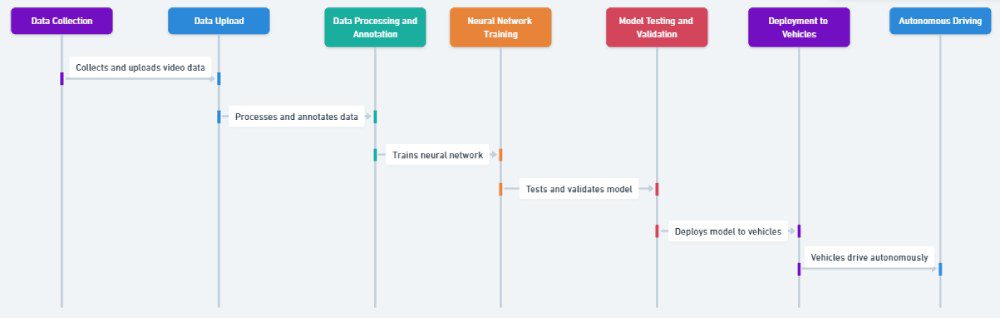

Self-driving cars, or autonomous vehicles (AVs), are a big deal in the car world. They bring together the latest tech with everyday transportation, opening the door to a new way of getting around. These smart cars use artificial intelligence, sensors, and advanced neural nets to drive on their own without a person at the wheel.

Here is a simplified version of how Tesla, a leader in self-driving, runs the car on neural nets:

The global AR and VR market is on a robust growth trajectory. According to a report by Statista, the market size of AR and VR is expected to reach $296.9 billion by 2024, showcasing a world ripe for digital interactivity.

Market Growth:

- According to a study by Allied Market Research, the self-driving car market is projected to reach $448.6 billion by 2035, growing at a CAGR of 22.2% from 2025 to 2035. This shows they could really change the way we get around.

Safety Advancements in AV:

- Safety stands at the forefront of AV technology.

- By reducing the scope of human error, which according to the National Highway Traffic Safety Administration, is a contributing factor in 94% of crashes, autonomous vehicles promise a significant reduction in road accidents.

Environmental Considerations:

- The environmental footprint is also a consideration.

- AVs, often coupled with electric power, present a greener alternative to traditional gasoline-powered vehicles.

- Companies like Tesla are leading the charge towards eco-friendlier, autonomous mobility solutions. In February 2024, Tesla released FSD 12 (version 12 of its Full Self-Driving software), in which driving decisions are made by AI neural nets rather than by large amounts of hand-written code. This is a big step for autonomy and eco-friendly solutions.

Here is a video of Tesla driving on its own:

There are still a lot of problems to solve, though. There are questions about what’s right and wrong, risks of hackers, and rules that need to be figured out. It is important to have clear laws and understand the right and wrong of letting self-driving cars make decisions on the road. These are big steps to make sure self-driving cars can safely become a part of our everyday lives.

For businesses and professionals in the automotive and tech sectors, it’s important to get the new tech that’s making self-driving cars possible. They also need to know the regulatory landscape and what people think about these cars. Talking about safety, doing the right thing, and how self-driving cars affect our planet is key to meeting what society and the law expect when it comes to this new way of getting around.

As we cruise through 2024, the roadmap towards full autonomy continues to unfurl, promising a future where transportation is safer, more efficient, and more environmentally friendly.

Recent Updates / Breakthroughs in Autonomous Driving (2024 Updated)

Tesla New Full Self Driving Update

Tesla continues to update its Full Self-Driving (FSD) software. According to a report by Not a Tesla App, the latest 12.4 version removes the “wheel nag,” the repeated prompt for the driver to keep their hands on the wheel. This update aims to enhance the user experience and bring Tesla closer to achieving fully autonomous driving.

Advanced Sensor Technologies

Sensor technologies have seen significant advancements, particularly with more compact and efficient lidar systems. Combining sensors like cameras, radars, and ultrasonic sensors enhances situational awareness, reduces blind spots, and boosts safety. As per the Industry Wired report, these improvements are shaping the future of autonomous vehicle technology.

Robot Driving Non-Electric Car

Kento Kawaharazuka, an assistant professor in robotics at the University of Tokyo, recently published a paper detailing an autonomous driving project using the Musashi musculoskeletal humanoid robot. The robot’s advanced design allows it to mimic human movements and perform tasks like operating car pedals and steering wheels. Here’s a video of it driving:

Career in Autonomous Vehicles Industry :

-

Job Growth :

The autonomous vehicle industry is experiencing rapid growth, with expectations of creating many job opportunities in the coming years, as per IDTechEx.

-

Roles :

Autonomous Vehicle Software Engineer, Vehicle System Engineer, Data Analyst, Safety Driver for Autonomous Vehicle Testing…

-

Employment Opportunities :

Companies like Tesla (USA) and BYD (China) are leading the way in autonomous vehicle technology, offering a myriad of job opportunities.

Trend 10: Sustainable Technology

- The global market for sustainable technologies is burgeoning. According to MarketsandMarkets, the smart green technology market alone is expected to reach $36.6 billion by 2025, showcasing a significant shift towards eco-conscious technological solutions.

- Renewable energy, especially solar and wind power, is helping us move towards cleaner ways to get electricity. Companies like Tesla are at the forefront of this change, not only with electric cars but also with innovative solar power solutions.

- Tesla recently launched a new product called Powerwall 3 in the US, with plans to release it worldwide soon. It’s a big battery that stores solar energy. During a power outage, it uses the stored energy to power your home and electric car, and recharges itself with sunlight.

- The Powerwall is gaining popularity both in the US and globally, showing that more people are getting interested in smart, sustainable technology to meet their energy needs.

- In the realm of software, energy-efficient algorithms and data centers are gaining traction. Companies like Google are striving towards achieving zero net carbon emissions through various sustainable practices including optimizing data center operations for energy efficiency.

- E-waste management is another crucial facet of sustainable technology. Initiatives like Apple’s recycling program are aimed at reducing electronic waste and promoting the recycling of devices, aligning with the broader goal of a circular economy.

- Moreover, sustainable technology is fostering smarter, greener urban planning through smart city solutions that optimize resource usage, reduce waste, and enhance the quality of life for residents.

- The integration of AI and big data analytics in monitoring and managing environmental resources is further propelling the ability to make data-driven decisions for sustainable practices.

For businesses and professionals, embracing sustainable technology is not just an environmental imperative but also an avenue for innovation, cost savings, and alignment with evolving regulatory and societal expectations.

The narrative of sustainable technology is a compelling testament to the symbiotic potential between innovation and sustainability. As we navigate through 2024, the adoption and advancement of sustainable technology are poised to play a pivotal role in steering the global community towards a more eco-conscious and sustainable future.

Career in Sustainable Technology

-

Job Growth :

The demand for sustainable technology roles has surged, with a notable growth in green jobs, as per a report by the International Renewable Energy Agency (IRENA).

-

Roles :

Sustainability Analyst, Renewable Energy Project Manager, Environmental Engineer

-

Employment Opportunities :

Companies like Tesla, Siemens, and General Electric are at the forefront of sustainable technology, offering numerous job opportunities in this sector.

Trend 11: Robotic Process Automation (RPA)

Today’s businesses are always trying to work faster and better. Robotic Process Automation (RPA) is a handy tool for this. It helps companies use robots to do boring, repeated jobs, so people have more time for important and creative work.

According to a report by Grand View Research, the worldwide RPA market (software robots that handle repetitive tasks) was worth $2.3 billion in 2022. This market is expected to grow fast, at a rate of 39.9% each year from 2023 to 2030.

RPA finds its utility across a plethora of sectors:

- In finance, it’s streamlining processes like invoice processing and reconciliation. Platforms like UiPath are facilitating seamless integration of RPA, enhancing operational efficiency.

- In healthcare, RPA is aiding in claims processing, appointment scheduling, and data management, thereby enhancing the overall operational efficiency and patient experience.
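As a rough picture of the kind of repetitive job RPA takes over, here is a small Python sketch of invoice reconciliation. It is a simplified stand-in, not how UiPath or any other platform works internally. The `reconcile` function and the invoice numbers are invented for the example, and amounts are kept in whole cents to avoid rounding surprises.

```python
def reconcile(invoices, payments):
    """Compare issued invoices with received payments.

    Both arguments map an invoice ID to an amount in cents.
    Returns three lists of invoice IDs: matched, mismatched, unpaid.
    (Hypothetical helper for illustration.)
    """
    matched, mismatched, unpaid = [], [], []
    for invoice_id, amount in invoices.items():
        if invoice_id not in payments:
            unpaid.append(invoice_id)       # no payment received at all
        elif payments[invoice_id] == amount:
            matched.append(invoice_id)      # paid in full
        else:
            mismatched.append(invoice_id)   # paid, but the amount differs
    return matched, mismatched, unpaid


# Assumed sample data.
invoices = {"INV-1": 12000, "INV-2": 4550, "INV-3": 990}
payments = {"INV-1": 12000, "INV-2": 4500}

matched, mismatched, unpaid = reconcile(invoices, payments)
print("Matched:", matched)
print("Needs review:", mismatched)
print("Unpaid:", unpaid)
```

A person would then only look at the "needs review" and "unpaid" lists, which is where the time savings come from.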

Furthermore, RPA (Robotic Process Automation) is like a stepping stone to fancier stuff like AI (Artificial Intelligence) and Machine Learning. It helps organize data in a way that makes it easy to study and learn from. This teamwork is creating paths for smarter, self-learning automated solutions.

However, a few challenges need navigating to unlock the full potential of RPA:

- Process selection

- Change management

- Cybersecurity concerns

For businesses and professionals, getting to know how Robotic Process Automation (RPA) works, the hurdles in making it work, and how it gets along with other new tech is key. It helps in making the most out of automatic ways of doing things while keeping problems at bay.

The story of RPA (Robotic Process Automation) is all about making work faster, easier, and better. As we move through 2024, RPA keeps improving, especially when combined with smarter tech like AI (Artificial Intelligence). This combo is set to change the way we work, bringing about smarter automation and helping organizations get more done.

Career in Robotic Process Automation :

-

Job Growth :

According to Solutions, the RPA market has shown outstanding growth in recent years, leading to a notable demand for RPA roles globally. In India alone, there are 6,000 vacancies for various RPA job roles.

-

Roles :

RPA Developer, RPA Engineer, RPA Technical Lead, RPA Solutions Senior Developer, RPA Consultant, among others.

-

Employment Opportunities :

Major companies like Cognizant, TCS, Infosys, Accenture, Blue Prism, UiPath, and Automation Anywhere are leveraging RPA, creating numerous job opportunities globally.

Trend 12: Spatial Computing

Spatial Computing takes us beyond the usual flat screen, letting us interact with a mix of the digital and real world. It includes cool tech like Augmented Reality (AR) and Virtual Reality (VR), sensors, and super-fast wireless networks like 5G.

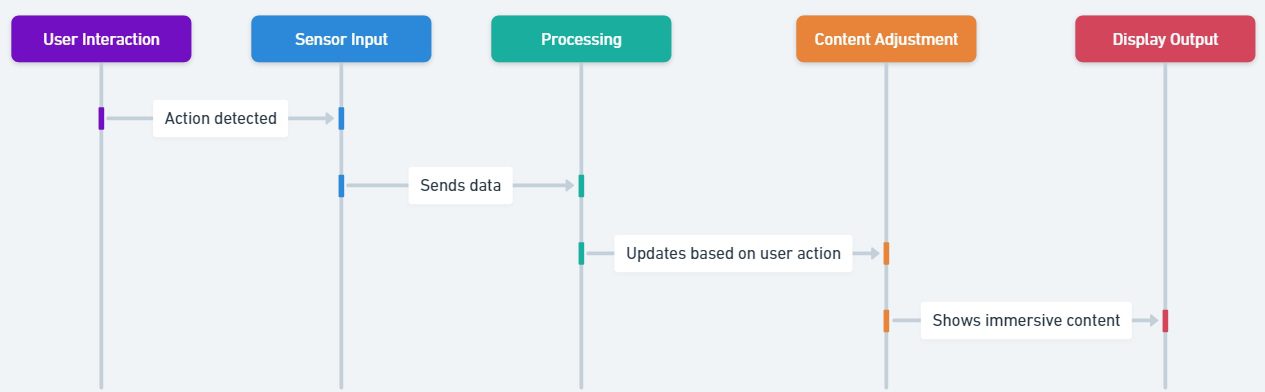

Here is a simple version of what typical interactions in a spatial computing system look like:

An article on GlobeNewswire, based on a Market.us study, says the worldwide market for this kind of computing could hit $620.2 billion by 2032.

Industries leveraging Spatial Computing include:

- Real Estate: for virtual tours.

- Retail: for AR shopping experiences.

Companies like Apple & Magic Leap are pioneering spatial computing solutions, making interaction with digital stuff feel more natural and exciting, almost like mixing our real world with the digital one.

Moreover, Spatial Computing is paving the way for more efficient and safe autonomous vehicle operations by providing:

-

Real-time 3D mapping,

-

Advanced navigational assistance.

However, challenges such as:

-

Data privacy

-

High costs

-

The need for robust infrastructure

are hurdles on the path to widespread adoption.

As we step into 2024, embracing Spatial Computing could be synonymous with stepping into a future of endless digital-physical interactive possibilities, offering a new lens through which we perceive and interact with the world around us.

Careers in Spatial Computing :

-

Job Growth :

With the spatial computing market projected to grow from USD 120.5 billion in 2023 to USD 620.2 billion by 2032, the job market in this field is anticipated to expand significantly, particularly in tech-centric regions like India.

-

Roles :

Spatial Computing Specialist, Augmented Reality Developer, Virtual Reality Engineer, and Mixed Reality Designer are among the emerging positions.

-

Employment Opportunities :

Companies at the forefront of spatial computing innovation such as Apple & Meta are likely to offer numerous job opportunities, with India & USA being key players in the tech industry.

Real-World Example of Spatial Computing with Apple Vision Pro

Trend 13: Voice Assistants and Natural Language Processing (NLP)

Voice Assistants and Natural Language Processing (NLP) are changing the way we talk to machines. Now, machines can understand and respond to our everyday language, making them easier and friendlier to use.

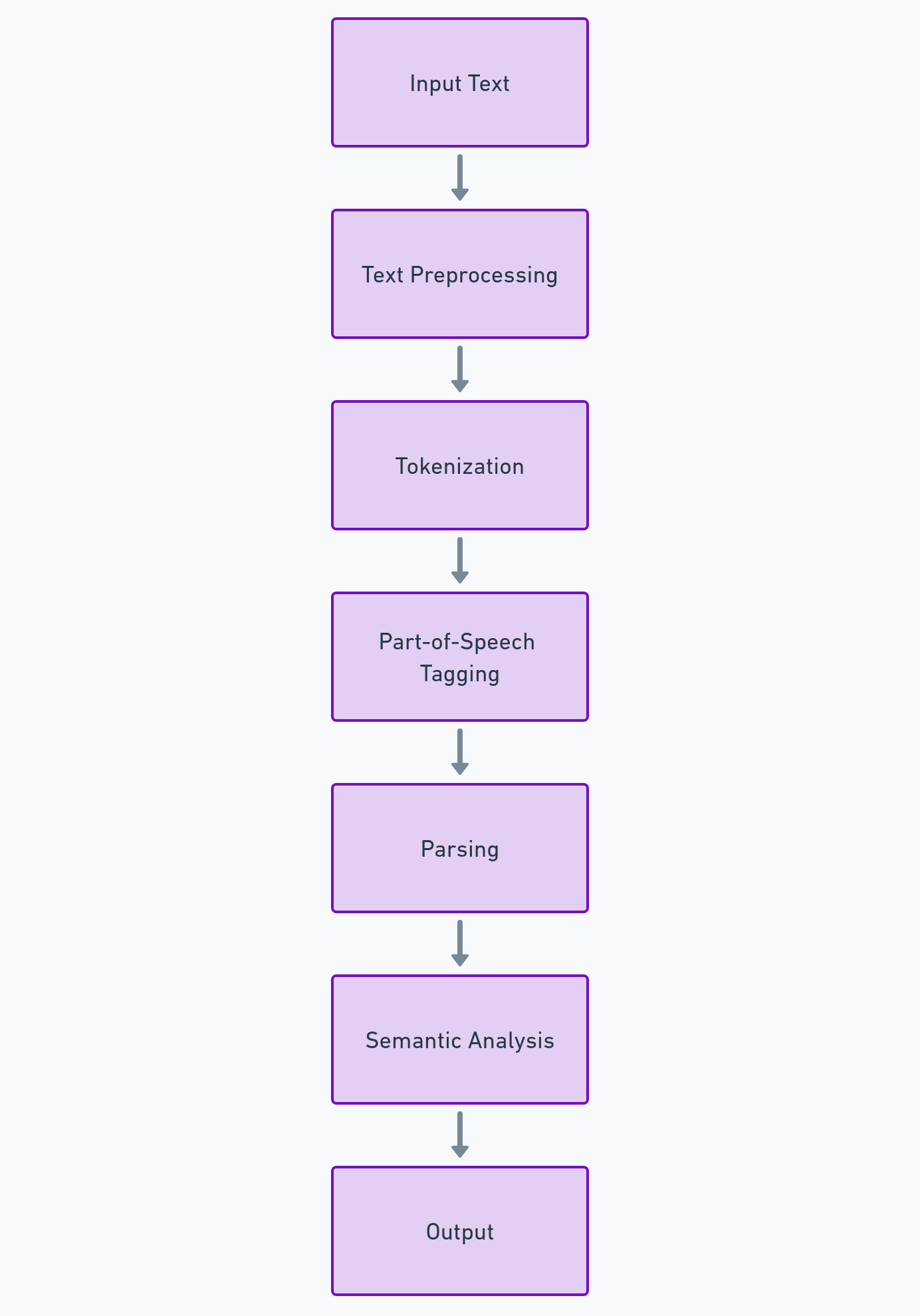

In a simplified way, this is how NLP works:

The worldwide market for NLP (Natural Language Processing, a type of artificial intelligence that helps computers understand human language) is getting bigger. It was worth about 19.68 billion dollars in 2022, and it’s expected to grow to 112.28 billion dollars by 2030, growing at a rate of 24.6% each year, according to a report by Fortune Business Insights. Big companies like Amazon and Google are at the forefront of this, with their smart helpers, Alexa and Google Assistant, helping to drive this growth.

Industries utilizing Voice Assistants and NLP include:

- Healthcare: for appointment scheduling and medication reminders.

- Automotive: for safer, hands-free controls.
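To give a feel for what "understanding" a spoken request involves, here is a toy Python sketch of keyword-based intent matching, using the healthcare examples above. This is a deliberately simple stand-in: assistants like Alexa and Google Assistant rely on far more sophisticated machine-learning models. The intent names and keyword lists are invented for the example.

```python
import re

# Hypothetical intents and trigger words, chosen for this example only.
INTENTS = {
    "set_reminder": {"remind", "reminder"},
    "book_appointment": {"appointment", "book", "schedule"},
}


def detect_intent(utterance):
    """Return the intent whose keywords overlap most with the utterance."""
    # Lowercase the text and split it into simple word tokens.
    tokens = set(re.findall(r"[a-z']+", utterance.lower()))
    best_intent, best_score = "unknown", 0
    for intent, keywords in INTENTS.items():
        score = len(tokens & keywords)  # number of shared words
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


print(detect_intent("Please book an appointment with Dr. Lee"))
print(detect_intent("Remind me to take my medication at 8"))
print(detect_intent("What's the weather like?"))
```

Keyword matching breaks down quickly with slang, accents, or other languages, which is one reason real systems learn from large amounts of example speech instead.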

However, challenges remain, such as:

- Privacy concerns

- Linguistic diversity

As 2024 unfolds, the synergy between Voice Assistants and NLP is poised to deepen, driving a more conversational, accessible digital realm.

Careers in Voice & NLP Tech :

-

Job Growth :

The substantial growth in the NLP market, from USD 19.68 billion in 2022 to a projected USD 24.10 billion in 2023 as per a report by Fortune Business Insights, implies a growing demand for professionals skilled in Voice Assistants and NLP technologies.

-

Roles :

NLP Engineer, Voice User Interface (VUI) Designer, Speech Recognition Engineer, among others, are roles that would see a demand surge owing to the market growth.

-

Employment Opportunities :

Companies leading in AI and NLP technologies like Google, OpenAI, Amazon, and IBM offer myriad employment opportunities for individuals skilled in Voice Assistants and NLP technologies.

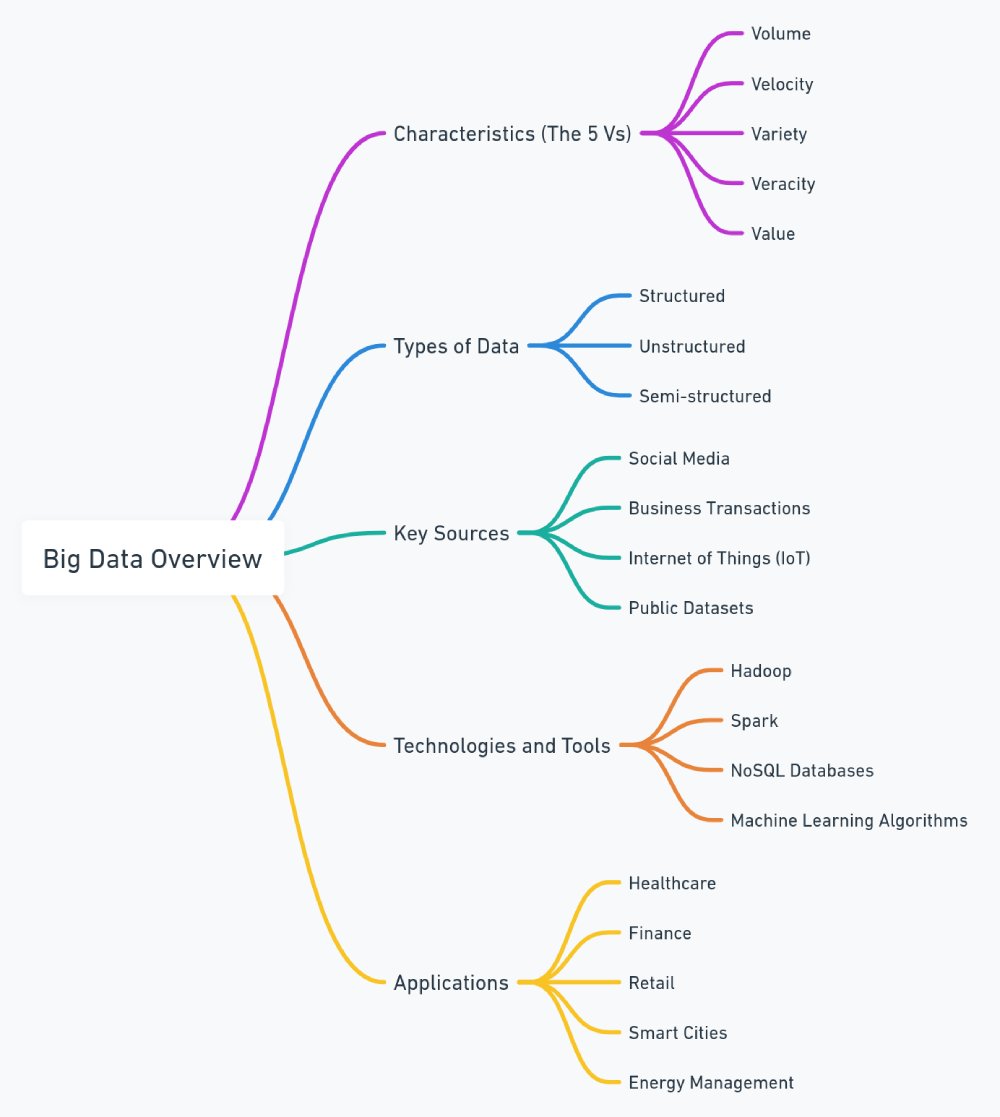

Trend 14: Big Data & Analytics

Big Data and Analytics are like guides helping us through a flood of digital information, turning plain data into useful tips. According to a report from Fortune Business Insights, the worldwide market for Big Data and Analytics is set to grow big time, hitting $745.15 billion by 2030, and it’s expected to grow even more after that.

Here’s a simple overview of Big Data:

Industries harnessing Big Data and Analytics include:

- Retail

- Healthcare

- Finance: for better decision-making and operational efficiency.

For instance, Tableau enables businesses to visualize data for better insights.

However, challenges persist, such as:

- Data privacy

- The skills gap

As we venture into 2024, Big Data and Analytics continue to be the linchpin for successful digital transformation, catalyzing a data-driven culture across the enterprise spectrum.

Careers in Big Data & Analytics :

-

Job Growth :

The reliance on big data and predictive analytics to drive business growth has heightened the demand for data analytics careers, as per a report by 365 Data Science.

-

Roles :

Data Analyst, Business Intelligence Analyst, Data Engineer, among others, are in demand.

-

Employment Opportunities :

Companies like PayPal, Diverse Lynx, and Insight Global are among the top hirers in the data analytics field.

Trend 15: Smart Cities

Smart Cities are great examples of how technology and city living can work together. They are expected to be worth a whopping $3.84 trillion by 2029, says Mordor Intelligence. They use cool tech like IoT (Internet of Things), AI (Artificial Intelligence), and Big Data to make city life better.

Cities worldwide are adopting smart solutions. For instance:

- Singapore’s Smart Nation initiative aims at excellence in urban living through tech.

Challenges persist, such as:

- Data privacy

- Infrastructure costs

Yet, as we step into 2024, the blueprint of Smart Cities unfolds promisingly, heralding a new era of sustainable, efficient urban living.

Careers in Building Smart Cities :

-

Job Growth :

The Smart Cities market size is estimated at USD 1.36 trillion this year, which indicates a rising demand for professionals in this domain, as per a report by Mordor Intelligence.

-

Roles :

Urban Planners, Smart City Consultants, IoT Specialists, and AI/ML Engineers are among the roles seeing a demand surge.

-

Employment Opportunities :

Key players like Cisco Systems and Siemens AG are spearheading smart city projects, offering numerous job opportunities.

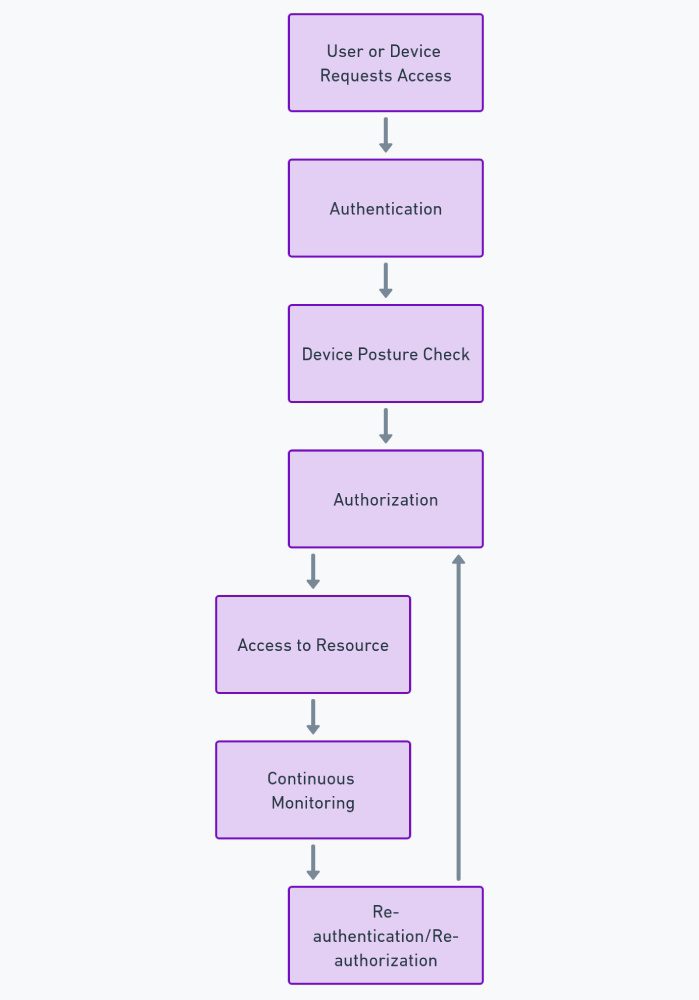

Trend 16: Zero Trust Architecture

Zero Trust Architecture (ZTA) is a cybersecurity paradigm centered on the principle that trust is never assumed and must be continuously verified. According to MarketsandMarkets, as cyber threats grow in sophistication, the global market for Zero Trust Security is expected to reach $51.6 billion by 2026.
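The "never assume, always verify" principle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a real security product: the `Request` fields, the `POLICY` table, and the user names are all hypothetical. Notice that the check runs on every request and deliberately ignores whether the request comes from inside the company network.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user: str
    token_valid: bool            # did the identity check pass just now?
    device_compliant: bool       # is the device patched and managed?
    resource: str
    from_internal_network: bool  # present only to show it is NOT trusted


# Hypothetical least-privilege policy: which user may reach which resource.
POLICY = {
    "alice": {"payroll-db"},
    "bob": {"wiki"},
}


def authorize(request):
    """Decide access for one request; called again for every request."""
    if not request.token_valid:
        return False
    if not request.device_compliant:
        return False
    # Network location is intentionally not consulted here.
    return request.resource in POLICY.get(request.user, set())


inside_but_unverified = Request("alice", False, True, "payroll-db", True)
outside_and_verified = Request("alice", True, True, "payroll-db", False)
print(authorize(inside_but_unverified))
print(authorize(outside_and_verified))
```

In a classic "castle and moat" setup the first request would likely get through simply because it comes from the office network. Under zero trust it is refused because the identity check failed.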

Here is a simplified version of how it works :

Companies offering comprehensive ZTA solutions include:

However, challenges exist, such as:

- Implementation complexity

- Legacy system compatibility

As 2024 unfolds, ZTA is poised to be a cornerstone in modern cybersecurity strategies, ensuring robust protection in an increasingly interconnected world.

Careers in ZTA Tech :

-

Job Growth :

The MarketsandMarkets projection cited above, $51.6 billion by 2026, indicates a growing demand for professionals skilled in this architecture.

-

Roles :

Zero Trust Security Architect, Network Security Engineer, and Cybersecurity Analyst are among the in-demand positions.

-

Employment Opportunities :

With the rise in zero trust security initiatives, companies like Palo Alto Networks, Cisco, and Akamai Technologies are among the top employers.

Trend 17: Digital Twins

Digital Twins are virtual replicas of physical entities, providing a real-time look into the lifecycle and performance of products or processes. The market is blossoming, with a projection to reach $48.2 billion by 2026, according to MarketsandMarkets.

Industries like manufacturing and urban planning are leveraging Digital Twins for optimized operations. Challenges like data security remain, yet the benefits are compelling.
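As a small illustration of the idea, the Python sketch below models a digital twin of a factory pump. Everything in it is assumed for the example: the `PumpTwin` class, the `temp_c` telemetry field, and the temperature limit. The twin simply mirrors the latest readings from the physical pump so that software can inspect its state without touching the machine.

```python
from dataclasses import dataclass, field


@dataclass
class PumpTwin:
    """A virtual stand-in for one physical pump (hypothetical example)."""
    max_temp_c: float
    temp_c: float = 0.0
    history: list = field(default_factory=list)

    def ingest(self, reading):
        """Update the twin from one telemetry message sent by the real pump."""
        self.temp_c = reading["temp_c"]
        self.history.append(self.temp_c)

    def overheating(self):
        """Check the mirrored state against the configured limit."""
        return self.temp_c > self.max_temp_c


twin = PumpTwin(max_temp_c=80.0)
for message in ({"temp_c": 75.0}, {"temp_c": 85.5}):  # assumed sample telemetry
    twin.ingest(message)
    print(message["temp_c"], "overheating" if twin.overheating() else "ok")
```

Real digital twins add physics models and simulations on top of this mirrored state, but the loop of "receive data, update the replica, ask questions of the replica" is the core of it.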

As 2024 progresses, Digital Twins stand as a beacon of operational excellence and innovation, paving the way for enhanced real-world outcomes through digital insights.

Careers in Digital Twins Tech :

-

Job Growth :

As per The Business Research Company, the global digital twin market is forecasted to grow to $56.66 billion in 2027 at a CAGR of 39.0%, suggesting a rising demand for skilled professionals in this domain.

-

Roles :

Digital Twin Engineer, Simulation Engineer, and IoT Architect are among the sought-after roles.

-

Employment Opportunities :

Companies like Siemens, IBM, and GE Digital are at the forefront of digital twin technology, offering numerous job opportunities.

Trend 18: Low Code/No Code Development

Low Code/No Code Development platforms are democratizing software development, making it accessible beyond just tech-savvy individuals. According to a report by Fortune Business Insights, the global low code development platform market is projected to grow from USD 13.89 billion in 2021 to USD 94.75 billion by 2028, a CAGR of 31.6% over the forecast period.
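Since CAGR (compound annual growth rate) figures appear throughout this article, here is a short Python sketch of how such a number is worked out, using the low code market figures above. The `cagr` helper is written for this example. It applies the standard formula: the ratio of the end value to the start value, raised to the power of one over the number of years, minus one.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.25 means 25% a year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in USD billions; 2021 to 2028 is a span of 7 years.
rate = cagr(13.89, 94.75, 2028 - 2021)
print(f"Implied CAGR: {rate:.1%}")
```

Run on these inputs, the result lands very close to the 31.6% quoted in the report, which is a handy way to sanity-check any market forecast you come across.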

Platforms like Mendix or Microsoft Power Apps are empowering individuals to create apps with minimal coding knowledge.

However, issues like security and scalability are concerns. As we venture into 2024, these platforms are anticipated to mature, addressing existing challenges and further democratizing software development.

Careers in Low Code/No Code Development :

-

Job Growth :

The surge in Low Code/No Code platforms adoption, enabling faster application development as per Quixy, hints at a growing job market for developers and citizen developers alike.

-

Roles :

Low Code/No Code Developer, Application Developer, and Citizen Developer.

-

Employment Opportunities :

Companies embracing Low Code/No Code platforms offer numerous job opportunities, with industries like IT, finance, and healthcare leading the adoption.

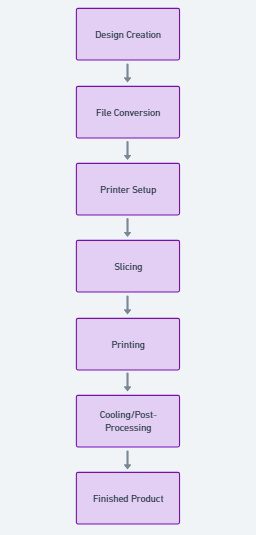

Trend 19: 3D Printing

3D Printing, also known as additive manufacturing, fabricates objects layer by layer from digital models.
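The "layer by layer" idea can be shown with a tiny Python sketch of the slicing step, where software decides how many layers are needed to build up an object of a given height. The `slice_layers` function and the sizes are assumptions made for this example. Real slicing software also works out the shape of each layer, which is left out here. Heights are in whole micrometres to keep the arithmetic exact.

```python
def slice_layers(height_um, layer_um):
    """Return the top height of each printed layer, in micrometres.

    The final layer is thinner if the object height is not an exact
    multiple of the layer thickness. (Hypothetical helper for illustration.)
    """
    if height_um <= 0 or layer_um <= 0:
        raise ValueError("height and layer thickness must be positive")
    layer_count = -(-height_um // layer_um)  # ceiling division on integers
    return [min((i + 1) * layer_um, height_um) for i in range(layer_count)]


# Assumed example: a 10 mm tall part printed in 0.2 mm layers.
layers = slice_layers(10_000, 200)
print(len(layers), "layers; the last one tops out at", layers[-1], "micrometres")
```

Thinner layers give a smoother finish but mean more layers and a longer print, which is one of the basic trade-offs in additive manufacturing.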

This is how 3D printing works :

This technology is revolutionizing manufacturing by enabling rapid prototyping and production. Valued at $26.51 billion in 2018, it’s anticipated to soar to $33.74 billion by 2024, marking a CAGR of 26.75%, as reported by Envision Intelligence.

Key players like Stratasys are leading, though hurdles like material limitations linger.

As 2024 unfolds, 3D Printing continues to redefine traditional manufacturing paradigms, spurring innovation across sectors.

Recent Updates / Breakthroughs in 3D Printing (2024 Updated)

High-Temperature Super Alloy:

NASA and The Ohio State University have developed GRX-810, a high-temperature alloy known for its strength and ductility. This alloy can withstand extreme environments, making it ideal for aerospace applications like airplane and spacecraft parts. This breakthrough is crucial for advancing aerospace technology by providing durable materials, as detailed in a scitechdaily report.

Microscale 3D Printing:

As per sciencedaily, researchers at Stanford University have developed a high-speed microscale 3D printing technique called roll-to-roll 3D printing. This method enables the creation of shape-specific particles with high resolution and greatly accelerates the production of microscale objects. This advancement is crucial for rapid and precise manufacturing of components in electronics, medicine, and materials science.

Bioprinting Human Tissue:

The National Institutes of Health have made strides in 3D bioprinting human tissues, using hydrogels and biological materials to create complex structures like blood vessels with high precision. This technology could transform medical research and treatment, providing better models for studying diseases and testing drugs, as noted by Kristy Derr from NIH’s NCATS lab.

Careers in 3D Printing :

-

Job Growth :

As per the data above, the 3D printing market is projected to reach USD 33.74 billion by 2024, indicating a rising demand for professionals in this domain.

-

Roles :

3D Printing Technician, 3D Modeler, and Additive Manufacturing Engineer.

-

Employment Opportunities :

Companies leading in 3D printing technologies offer numerous job opportunities, with industries like automotive, aerospace, and healthcare being major adopters.

Trend 20: Extended Reality

Extended Reality (XR) encapsulates Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality (MR), forging a bridge between digital and physical worlds.

Here’s a simplified version of how it works :

The global XR market is on a growth trajectory, predicted to hit $472.39 billion by 2028, as per Mordor Intelligence. XR is making strides in education, healthcare, and retail.

Firms like Magic Leap are pioneering this space. Challenges like high costs and privacy issues persist, yet the advancements in 2023 are promising.

XR is evolving to offer enriched user experiences, blending digital and physical interactions seamlessly across various domains.

Recent Updates / Breakthroughs in Extended Reality (2024 Updated)

Samsung XR Headset ‘Infinite’:

According to a report from 9to5Google (via JoongAng), Samsung plans to launch its XR headset, “Infinite,” in late 2024. Developed with Google and Qualcomm, it aims to rival Apple’s Vision Pro and will debut alongside the Galaxy Z Flip 6. Initial production will be limited to 30,000 units to ensure high quality.

Generative AI in XR:

Generative AI is transforming extended reality (XR) by enabling real-time, personalized content creation. XR platforms now adapt dynamically, crafting unique virtual environments based on user interactions. This enhances user engagement and immersion, as noted in Axis XR’s report.

Multi-Sensory XR Technologies:

The integration of multi-sensory technology in XR is advancing to include haptic feedback, spatial audio, and even olfactory stimuli, creating a more immersive and realistic user experience. Users can now feel, hear, and smell virtual environments, significantly enhancing the depth and realism of XR experiences. As per the Axis XR report, these advancements are set to revolutionize how we interact with virtual worlds.

Careers in Extended Reality Tech :

-

Job Growth :

Growth from USD 105.58 billion in 2023 to USD 472.39 billion by 2028 suggests significant demand for professionals in this domain, which is a great sign for anyone looking to make a career in this field of tech.

-

Roles :

XR Developer, AR/VR Engineer, and 3D Modeler are among the sought-after positions.

-

Employment Opportunities :

Companies innovating in XR, like Meta (Oculus), Apple (Vision Pro), Magic Leap, and Microsoft, offer numerous job opportunities.

How New Trends in Information Technology will Evolve :

As we keep moving forward in the digital world, the cool stuff coming up in 2024 – 2025 will help guide how we use and accept new tech. Here is a glimpse into the potential future:

Trend Evolution:

- 5G & Beyond: The spread of 5G technology is going to kickstart the development of 6G, bringing us into a time where super-fast and dependable internet connections could make the online and real-world experiences feel more connected.

- AI & ML: When AI comes together with cool stuff like Virtual Reality and Blockchain, it can create new areas of tech. This mix helps make instant decisions and lets many kinds of businesses run things on autopilot.

Emerging Technologies:

- The melding of Quantum Computing with AI and ML might unlock unprecedented computational capabilities, potentially revolutionizing fields like cryptography, material science, and complex systems simulation.

Long-term Impact:

- As Smart Cities and Digital Twins keep getting better, city life could change a lot. Cities might become smarter, run more smoothly, and respond better to what people need.

- More places are starting to use Zero Trust Architecture, which is a fancy way of saying they do not trust anything on their networks from the get-go to keep their data safe. This is a big step towards making the online world safer from hackers and other threats.

Global Economies & Job Markets:

- The ripple effects of these trends could lead to more jobs in both new and existing areas, helping create a lively and strong job market ready to handle the changing demands of our digital world.

The tapestry of emerging trends in information technology from 2024 to 2025 hints at a future brimming with promise and challenges. The collective endeavor to harness these trends could propel us into a new epoch of digital innovation, fundamentally reshaping how we interact with the world around us.

How to Adapt to Changing IT trends? For students, working professionals & business owners

For Students:

- Enroll in online courses or attend workshops related to emerging IT trends.

- Follow reputable tech blogs, podcasts, and YouTube channels to stay updated.

- Participate in hackathons, coding competitions, or open-source projects to gain hands-on experience.

- Create personal or collaborative projects that incorporate new technologies.

- Join tech communities, both online and offline, to meet professionals and other students.

- Attend tech meetups, webinars, and conferences to learn from experts and build connections.

- Find mentors in your desired field who can guide you on the latest trends and career paths.

- Utilize platforms like LinkedIn to connect with industry professionals.

For Working Professionals:

- Take advantage of professional development opportunities offered by your employer.

- Pursue certifications in emerging technologies related to your field.

- Volunteer for projects at work that allow you to use new technologies or methodologies.

- Share your insights and experiences with new technologies through blogs or at team meetings.

- Engage with industry communities to exchange knowledge on latest IT trends.

- Attend professional seminars, workshops, and networking events.

- Subscribe to industry publications, and follow relevant online forums and social media groups.

For Business Owners:

- Provide training programs for your employees on new technologies and methodologies.

- Encourage a culture of continuous learning and improvement within your organization.

- Evaluate and implement tools and technologies that can improve efficiency and productivity.

- Experiment with new technologies on smaller projects before rolling them out widely.

- Partner with tech companies or consult with IT experts to leverage their knowledge.

- Collaborate with other businesses to explore new technologies together.

- Regularly review the impact of new technologies on your business operations.

- Seek feedback from employees and customers on technology implementations.

CONCLUSION

As we wrap up our journey through the IT trends of 2024, it is thrilling to think about the tech advancements on the horizon. From faster 5G connections, and smart AI, to stronger cybersecurity, each trend opens new digital doors. I hope this peek into what is coming has been insightful for you. There are many career opportunities in these growing fields. So, here is to a bright future for you in these exciting industries. Your next big career move might just be a page away!

As you have stayed this far with us, this might interest you!

As a leading AI and Software Development company, Softlabs Group can help you navigate these emerging technologies to stay ahead of the curve.