Enterprise AI projects increasingly require systems that understand both images and text together – reading a medical scan alongside a patient report, extracting data from a scanned invoice, or running visual question answering on product catalogues. Standard computer vision tools handle images. Standard NLP handles text. But bridging both into one coherent reasoning system requires a specialized discipline: vision-language model development. Finding the right vision-language model development companies in India demands more than a vendor who offers “AI services.”

The five vision-language model development companies in India listed below were identified through specific capability verification – each must explicitly address VLMs, multimodal AI, or the intersection of computer vision and language models, not generic AI development claims. Softlabs Group leads the list, followed by four firms with documented multimodal and VLM-related delivery.

Each company has been assessed for technical depth in vision-language or multimodal AI architectures, verifiable service pages or case studies, and confirmed India headquarters. This is not a directory scrape – companies that offer only isolated computer vision or NLP services were excluded.

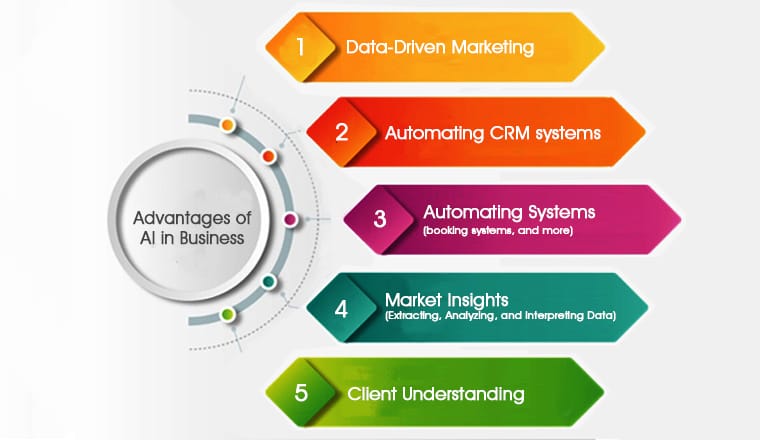

What Makes Vision-Language Model Development Important for Indian Businesses?

Vision-language model development enables Indian enterprises to build AI systems that process images and text in combination – unlocking automation that neither computer vision nor NLP alone can achieve. The demand for vision-language model development companies in India has grown sharply as enterprises move beyond isolated CV or NLP solutions.

Manufacturing firms use VLMs to detect defects and generate natural language inspection reports simultaneously. Healthcare organizations apply them to correlate scan images with clinical notes. Logistics companies extract structured data from handwritten or printed documents using VLM-powered OCR pipelines. The common thread: real-world data rarely comes in a single modality, and systems that cannot handle both vision and language leave significant automation potential unused.

India’s AI development ecosystem has matured considerably in this space. Research institutions and deep-tech firms are building on open-weight multimodal architectures like LLaVA, InternVL, and Qwen-VL, adapting them for domain-specific Indian business contexts including regulatory documents, regional language labels, and industry-specific imagery. According to Grand View Research, the global multimodal AI market is projected to grow at a compound annual rate exceeding 35% through 2030 – and Indian development partners are increasingly positioned to serve both domestic and global enterprise demand.

Which Companies in India Build Vision-Language Model Development Solutions?

The five vision-language model development companies in India below have been verified through multi-source validation: LinkedIn headcount confirmation, live proof link verification, topic-specific capability assessment, and geographic HQ confirmation.

1. Softlabs Group

★ Verified Listing

Core Expertise in Vision-Language Model Development: Softlabs Group combines 22+ years of custom AI and software development with a deep technical stack spanning computer vision (OpenCV, PyTorch, TensorFlow, Keras) and large language model frameworks (LangChain, Python, NLP tooling). This cross-domain foundation is precisely what vision-language model development demands – teams that can architect inference pipelines bridging visual encoders with language decoders, not teams that treat computer vision and NLP as separate silos.

Softlabs Group’s computer vision deployments include real-time PPE detection on industrial sites, AI-powered inventory tracking using visual recognition, and construction monitoring systems – all requiring tightly coupled image processing and contextual output generation. These production deployments reflect the same architectural discipline that VLM systems require: controlled inference, real-time performance on domain-specific imagery, and structured output that downstream systems can consume. The team’s LLM and generative AI practice adds the language reasoning layer, enabling Softlabs to build complete vision-language pipelines from image ingestion through to natural language response or structured extraction.

Contact: business@softlabsgroup.com | +91 7021649439

Explore Our AI Development Capabilities →

2. Carnot Research

★ Verified Listing

Carnot Research is the strongest specialist qualifier on this list for VLM work. Founded by IIT Delhi professors, the firm explicitly names vision-language models as a core research and delivery capability on its website – combining VLM integration for visual reasoning with OCR pipelines that digitize and interpret scanned and handwritten documents. Their multimodal ingestion work spans PDFs, web content, YouTube, and scanned materials, all processed through unified language-vision architectures.

The firm’s academic roots translate into genuine technical depth. Clients include OPPO, NSG, BCG, and JICA – organizations with demanding AI requirements. Carnot holds CMMI Level 3 certification and ISO 27001:2022 accreditation, and won the Transport Stack Open Innovation Challenge. For organizations that need research-grade VLM capability from a small, focused team rather than a large generalist firm, Carnot Research represents a distinctive option.

3. Hyperlink InfoSystem

★ Verified Listing

Hyperlink InfoSystem operates a dedicated multimodal AI development practice covering the architectural techniques central to vision-language systems: cross-modal representation learning, attention fusion networks, transformer-based approaches, and contrastive learning. Their service page describes scalable inference architectures that correlate and synthesize information across text, images, audio, and video – positioning them as a capable partner for organizations building production VLM pipelines at scale.

Founded in 2011, Hyperlink brings significant delivery scale to complex AI projects – 4,200+ applications delivered across their full service portfolio. Their ISO 9001:2015 certification signals process maturity for enterprise-grade engagements. The combination of size, structured process, and specific multimodal AI capability makes Hyperlink a practical choice for organizations that need a larger delivery team alongside genuine vision-language expertise.

4. Associative

★ Verified Listing

Associative runs a focused multimodal LLM development practice, building systems that process text and images simultaneously. Their documented approach integrates Computer Vision (OpenCV) directly into LLM workflows – enabling chatbots and automation tools that understand visual context alongside text input. This is the precise technical pattern underlying most practical VLM deployments: a language model extended with a visual encoder to reason across both modalities. Their stack includes LangChain, Ollama, Keras, PyTorch, and TensorFlow.

Founded in 2021, Associative is a younger firm but holds an Adobe Bronze Solution Partner designation – indicating enterprise-level partnership credentials despite small team size. Their positioning as a specialist multimodal LLM studio means engagements are likely to involve senior technical involvement rather than junior delivery layers. For organizations that prefer a focused specialist over a large generalist firm, Associative offers strong technical alignment with vision-language model development requirements.

5. A3Logics

★ Verified Listing

A3Logics lists Multimodal AI as a named development service within their AI practice, combining text, images, audio, and video data for enterprise decision-making. Their computer vision and OCR capabilities – covering document digitization, facial recognition, and defect detection – sit alongside NLP services built on CNNs, RNNs, and Transformer architectures. The convergence of these two practices is what qualifies them for vision-language model development work: they build at the intersection of visual processing and language understanding.

With 21+ years of experience and a 201-500 person team, A3Logics brings substantial delivery capacity. Their confirmed India entity operates as A3Logics India, headquartered in Gurugram. For organizations with complex, multi-workstream AI projects that require coordinated CV and NLP delivery alongside other software engineering, A3Logics offers the team depth and process maturity to manage that complexity.

Quick Reference: Vision-Language Model Development Providers by Specialisation

| Company | Location | Key Specialty |
| --- | --- | --- |
| Softlabs Group | Mumbai, Maharashtra | Custom AI development with computer vision and LLM stack for production VLM pipelines |
| Carnot Research | New Delhi, Delhi | Deep-tech VLM research and delivery, founded by IIT Delhi professors, explicit VLM + OCR visual reasoning |
| Hyperlink InfoSystem | Ahmedabad, Gujarat | Dedicated multimodal AI service with cross-modal fusion, attention networks, and large delivery scale |
| Associative | Pune, Maharashtra | Specialist multimodal LLM development integrating Computer Vision into language model workflows |
| A3Logics | Gurugram, Haryana | Multimodal AI combining CV, OCR, and NLP for document and defect detection use cases |

Ready to discuss your vision-language model development requirements with our team?

Talk to Softlabs Group

How Do You Verify a Company’s Vision-Language Model Development Capabilities?

Evaluate vision-language model development companies in India based on documented multimodal architecture work, specific framework expertise, and verifiable production deployments – not generic AI capability claims.

The companies listed above were verified through rigorous multi-source validation across five dimensions:

Topic-Specific Capability Verification: Each company must explicitly reference VLMs, multimodal AI, or the combination of computer vision and language models on their service pages. “We do AI” does not qualify. Firms that offer only isolated computer vision or only NLP were excluded.

Live Proof Link Validation: Every proof link was manually checked. No dead URLs, no redirects to generic homepages. Where companies listed only a domain, direct searches were run for specific service or solution pages before any link was included.

Geographic HQ Confirmation: India headquarters verified via company websites, LinkedIn, and MCA records. Satellite offices and “India-origin teams” without confirmed Indian HQ were not counted.

Headcount Verification: LinkedIn company page data only. Where headcount is not publicly available, the entry reads “not publicly disclosed” – no estimates were used.

Framework and Architecture Assessment: For vision-language model development specifically, companies were assessed for mentions of relevant architectures and tools – transformers, contrastive learning, attention fusion, cross-modal representation, OpenCV integration with LLMs, or named VLM model families. Buzzword-only claims without architecture specifics were treated as weak qualifiers.

Questions to ask shortlisted vendors:

- Which VLM architectures have you deployed in production – LLaVA, InternVL, CLIP-based systems, or custom builds?

- Can you describe a specific use case where you integrated a visual encoder with a language decoder for a client?

- How do you handle inference latency for VLM systems where real-time response is required?

- What is your approach to fine-tuning a base VLM on domain-specific imagery – for example, industrial defect images or medical scans?

- How do you manage the data pipeline for multimodal training – image preprocessing, tokenization, and alignment?

What’s Happening in Vision-Language Model Development Right Now?

Vision-language model development has shifted from closed proprietary systems to a rich open-weight ecosystem, dramatically lowering the barrier for custom enterprise deployment. For vision-language model development companies in India, this shift has opened significant opportunity to serve both domestic and global clients using locally deployed, private infrastructure.

The release of models like LLaVA-1.6, InternVL2, Qwen-VL, and Phi-3-Vision over the past 12-18 months has given Indian development teams high-quality open-weight VLM bases to fine-tune for specific industry domains – without depending on GPT-4V API pricing or sending sensitive data to an external provider. This matters for enterprise buyers: custom VLM solutions can now be deployed on private infrastructure with full data control.

Indian AI labs and development firms are increasingly applying these models to document understanding use cases – extracting structured data from mixed text-image documents like invoices, shipping forms, and regulatory filings. The agentic AI development trend has accelerated this: VLMs are now being embedded as perception layers within larger agentic systems, where an agent uses a VLM to interpret visual inputs before reasoning and acting.
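To make the document-extraction pattern concrete, here is a minimal sketch of what typically sits after the model: parsing the VLM's reply into a typed record and checking it before downstream systems consume it. The JSON shape, the field names, and the `parse_invoice` helper are all hypothetical, chosen for illustration; a real project would define its own schema and prompt the model to follow it.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class LineItem:
    description: str
    quantity: int
    unit_price: float


@dataclass
class Invoice:
    invoice_number: str
    total: float
    items: List[LineItem]


def parse_invoice(raw: str) -> Invoice:
    """Parse a (hypothetical) JSON reply from a VLM and sanity-check it."""
    data = json.loads(raw)
    items = [
        LineItem(str(i["description"]), int(i["quantity"]), float(i["unit_price"]))
        for i in data["items"]
    ]
    invoice = Invoice(str(data["invoice_number"]), float(data["total"]), items)
    # Cross-check: the stated total should match the sum of the line items.
    computed = sum(item.quantity * item.unit_price for item in items)
    if abs(computed - invoice.total) > 0.01:
        raise ValueError(f"total {invoice.total} does not match line items {computed}")
    return invoice


# Illustrative model output; not taken from any real system.
SAMPLE_REPLY = """
{"invoice_number": "INV-001", "total": 500.0,
 "items": [{"description": "Sensor", "quantity": 2, "unit_price": 150.0},
           {"description": "Cable", "quantity": 1, "unit_price": 200.0}]}
"""

invoice = parse_invoice(SAMPLE_REPLY)
print(invoice.invoice_number, invoice.total, len(invoice.items))
```

A consistency check like the total comparison is one cheap way to catch misread digits; records that fail it can be routed to manual review instead of being written to the ERP.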

On the hardware side, NVIDIA’s Blackwell architecture has made VLM inference substantially more cost-efficient at scale, improving the economics of production deployment. Indian cloud providers and colocation facilities have begun offering Blackwell-tier compute access, which means locally hosted VLM inference is increasingly viable for mid-market enterprises.

What Should You Expect During Vision-Language Model Development Implementation?

Implementation of a production VLM system typically spans 10-20 weeks for a custom solution, with complexity varying significantly based on whether you use a pre-trained base model or require domain-specific fine-tuning. Leading vision-language model development companies in India follow a structured phased approach to manage this complexity.

Phase Breakdown:

- Discovery and scoping: 2-3 weeks – defining the input modalities, expected outputs, latency requirements, and deployment environment

- Data preparation: 2-4 weeks – curating image-text pairs for fine-tuning or evaluation, cleaning and preprocessing domain-specific imagery

- Model selection and fine-tuning: 3-5 weeks – selecting a base VLM architecture, fine-tuning on domain data, iterating on evaluation benchmarks

- Integration and inference pipeline: 2-4 weeks – connecting the VLM to downstream systems, optimizing for inference speed, building the API layer

- Testing and deployment: 1-2 weeks – load testing, edge case evaluation, production deployment and monitoring setup
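As a quick check on the phase estimates above, the short sketch below adds up the low and high ends of each range. It assumes the phases run strictly one after another with no overlap, which is a simplification; real projects often overlap data preparation with model selection.

```python
# Phase durations in weeks (low, high), copied from the phase breakdown above.
PHASES = {
    "Discovery and scoping": (2, 3),
    "Data preparation": (2, 4),
    "Model selection and fine-tuning": (3, 5),
    "Integration and inference pipeline": (2, 4),
    "Testing and deployment": (1, 2),
}


def total_range(phases):
    """Sum low and high estimates, assuming phases run strictly in sequence."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


low, high = total_range(PHASES)
print(f"Sequential total: {low}-{high} weeks")  # Sequential total: 10-18 weeks
```

The sequential total of 10-18 weeks sits inside the 10-20 week range quoted for a custom solution, which leaves roughly two weeks of headroom at the upper end for review cycles or rework.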

Common challenges include data scarcity for domain-specific fine-tuning – most enterprises have proprietary imagery but limited labeled text-image pairs for training. Experienced vision-language model development companies address this through synthetic data generation, few-shot prompting approaches, and transfer learning from related domains. Inference latency is another consideration: VLMs are computationally heavier than text-only LLMs, and production deployments require careful batching and hardware selection to meet response time requirements.
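The batching point can be illustrated with a small sketch. It groups incoming images into fixed-size batches so the model is called once per group rather than once per image. `fake_vlm` is a hypothetical stand-in for a real model call, and production serving stacks usually batch dynamically with a timeout rather than by fixed size, so treat this as the simplest form of the idea.

```python
from typing import Callable, Iterable, Iterator, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def batched(items: Iterable[T], batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive groups of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # emit the final, possibly smaller, batch
        yield batch


def run_in_batches(
    items: Iterable[T], batch_size: int, infer_batch: Callable[[List[T]], List[R]]
) -> List[R]:
    """Call infer_batch once per batch and flatten the results in input order."""
    results: List[R] = []
    for batch in batched(items, batch_size):
        results.extend(infer_batch(batch))
    return results


def fake_vlm(batch: List[str]) -> List[str]:
    # Hypothetical stand-in: a real call would run the model on the whole batch.
    return [f"caption for {name}" for name in batch]


captions = run_in_batches(["a.png", "b.png", "c.png"], 2, fake_vlm)
print(captions)
```

Larger batches generally raise throughput but also raise the wait for any single request, so batch size is tuned against the latency target for the use case.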

Organizations deploying VLMs for document extraction or visual question answering consistently report accuracy improvements over traditional OCR or rule-based systems – particularly on mixed-format documents and domain-specific imagery where training data is available. The implementation investment is justified by reduced manual processing, higher extraction accuracy, and the ability to automate workflows that previously required human visual interpretation.

What Influences Vision-Language Model Development Costs in India?

Vision-language model development costs in India depend on model architecture choice, fine-tuning requirements, and deployment infrastructure. Engaging vision-language model development companies in India typically offers a 40-60% cost advantage over equivalent US or European firms for comparable technical capability.

Key cost factors include:

- Base model selection: Using an open-weight VLM (LLaVA, InternVL) is more cost-efficient than building from scratch. Fine-tuning a base model costs significantly less than pretraining.

- Fine-tuning data requirements: Curating and labeling domain-specific image-text pairs is often the largest cost driver for specialized VLM deployments.

- Inference infrastructure: VLMs require GPU compute for inference. Private deployment (on-premise or private cloud) involves hardware costs; API-based deployment involves ongoing inference fees.

- Integration complexity: Connecting a VLM to existing enterprise systems – ERP, document management, quality control platforms – adds engineering scope.

- Latency requirements: Real-time VLM inference for use cases like live defect detection requires heavier hardware investment than batch-processing document extraction.
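The trade-off between private deployment and API-based deployment in the list above comes down to a break-even calculation. The sketch below shows the arithmetic; every figure in it is a hypothetical placeholder in arbitrary currency units, not a quoted price, and the model ignores factors such as hardware depreciation and staffing.

```python
import math
from typing import Optional


def breakeven_months(
    hardware_cost: float,
    private_monthly_cost: float,
    api_cost_per_1k_requests: float,
    requests_per_month: int,
) -> Optional[int]:
    """Months until upfront hardware spend is recovered by avoided API fees.

    Returns None when the API is cheaper per month, so there is no break-even.
    """
    api_monthly = api_cost_per_1k_requests * requests_per_month / 1000
    monthly_saving = api_monthly - private_monthly_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


# Hypothetical high-volume workload: API fees exceed private running costs.
high_volume = breakeven_months(30_000, 1_000, 10, 400_000)
# Hypothetical low-volume workload: API fees stay below private running costs.
low_volume = breakeven_months(30_000, 1_000, 10, 50_000)

print(high_volume)  # 10
print(low_volume)   # None
```

With these placeholder inputs the high-volume case recovers the hardware spend in 10 months, while the low-volume case never does, which is why request volume belongs in any scoped proposal.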

Indian development partners offer competitive rates relative to US or European VLM development firms, while maintaining access to the same open-weight model families and GPU infrastructure. Engaging multiple firms from this list for scoped proposals – with a clearly defined use case, data availability, and deployment environment – will produce accurate estimates. Well-scoped projects are consistently delivered more predictably than open-ended exploration engagements.

Frequently Asked Questions About Vision-Language Model Development in India

What is a vision-language model and how does it differ from standard computer vision?

A vision-language model (VLM) is an AI system that processes both images and text together, enabling tasks like visual question answering, image captioning, and document understanding where the model must reason across both modalities simultaneously. Standard computer vision models classify or detect objects in images and produce structured outputs like labels or bounding boxes – they do not generate or reason with language. VLMs bridge this gap, allowing natural language interaction with visual data. Examples include GPT-4V, LLaVA, and CLIP-based architectures.
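For readers who want to see how a CLIP-style model links the two modalities, the sketch below scores one image embedding against several caption embeddings using cosine similarity followed by a softmax. The three-dimensional vectors are made-up toy values, and the temperature of 0.07 is an assumed setting; real encoders produce vectors with hundreds of dimensions. Generative VLMs such as LLaVA work differently, feeding projected image features into a language model, so this covers only the contrastive matching idea.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cannot compare a zero vector")
    return dot / norm


def softmax(scores: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Turn scores into probabilities; lower temperature sharpens the result."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def rank_captions(
    image_vec: Sequence[float],
    caption_vecs: Sequence[Sequence[float]],
    temperature: float = 0.07,  # assumed value for illustration
) -> List[float]:
    """Probability that each caption matches the image."""
    return softmax([cosine(image_vec, c) for c in caption_vecs], temperature)


# Toy embeddings, invented for this example.
image = [0.9, 0.1, 0.0]
captions = {
    "a cracked weld": [0.8, 0.2, 0.1],
    "a scanned invoice": [0.0, 0.1, 0.9],
}
probs = rank_captions(image, list(captions.values()))
for text, p in zip(captions, probs):
    print(f"{text}: {p:.4f}")
```

The caption whose embedding points in nearly the same direction as the image embedding receives almost all of the probability mass, which is the behaviour contrastive training is designed to produce.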

Which industries in India benefit most from vision-language model development?

Manufacturing uses VLMs for defect detection with natural language reporting – a quality inspector can query a visual feed in plain language. Healthcare applies them to correlate medical imagery with clinical text for diagnostic support. Logistics and trade firms use VLMs to extract structured data from mixed-format shipping documents, invoices, and bills of lading. Insurance companies apply them to process claim images alongside policy text. Any industry with high volumes of mixed image-and-text documents is a strong VLM use case candidate.

Can vision-language models be deployed on private infrastructure in India?

Yes – the availability of open-weight VLM architectures like LLaVA, InternVL, and Qwen-VL means organizations can deploy fully private VLM systems on their own cloud or on-premise infrastructure. This is increasingly common for enterprises with data privacy or regulatory requirements that prevent sending visual data to external API providers. Indian vision-language model development companies with private LLM deployment experience are well positioned to support this architecture.

How much domain-specific data do I need to fine-tune a VLM for my industry?

This varies by task and base model quality, but practical fine-tuning for domain-specific applications typically requires several hundred to a few thousand labeled image-text pairs – far less than training from scratch. For document extraction tasks with consistent layouts, even smaller datasets can produce good results using few-shot prompting or parameter-efficient fine-tuning techniques like LoRA. A qualified vision-language model development company will assess your existing data assets during the discovery phase and recommend the most efficient fine-tuning approach given what you have.
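The reason LoRA needs comparatively little data and compute is that it trains two small low-rank matrices per adapted layer instead of the full weight matrix. The sketch below works through the parameter count for a single layer. The 4096 by 4096 layer size and rank 8 are illustrative assumptions, not figures from any particular model.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is fine-tuned."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable weights for a LoRA update B @ A, with B of shape d_out x rank
    and A of shape rank x d_in; the original matrix stays frozen."""
    return rank * (d_in + d_out)


# Illustrative layer size and rank, chosen only for the arithmetic.
d_in, d_out, rank = 4096, 4096, 8

full = full_params(d_in, d_out)
lora = lora_params(d_in, d_out, rank)
ratio = lora / full

print(full)            # 16777216
print(lora)            # 65536
print(f"{ratio:.2%}")  # 0.39%
```

Under these assumptions the adapter trains well under one percent of the layer's weights, which is what makes fine-tuning on a few hundred to a few thousand image-text pairs practical.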

How do I choose between a large multimodal AI firm and a specialist VLM studio?

Large firms offer delivery scale and structured project management – useful for complex multi-workstream projects or when VLM development is one component of a broader AI transformation. Specialist studios like Carnot Research offer deeper technical involvement and research-grade architecture decisions, which matters when your use case is novel or requires genuine model innovation. For most production VLM projects, the deciding factors are architecture specificity (can they describe exactly how they would approach your use case), proof of prior multimodal work, and team continuity on your project.

Conclusion: Choosing the Right Vision-Language Model Development Partner in India

The five vision-language model development companies in India listed above represent verified providers across a spectrum of team sizes and technical approaches – from the deep-tech research orientation of Carnot Research to the delivery scale of Hyperlink InfoSystem and the enterprise AI breadth of Softlabs Group. Each was included based on documented multimodal or VLM-specific capability, not generic AI positioning.

The open-weight VLM ecosystem is maturing rapidly. Organizations that begin custom VLM development now – with domain-specific fine-tuning and private deployment – are building a durable competitive advantage over those waiting for the technology to stabilize further. Indian development partners offer the combination of technical capability and cost-competitive delivery that makes this investment accessible at enterprise scale.

Whether your requirement is document extraction, visual question answering, manufacturing quality control, or a novel multimodal application, the companies listed above have the technical foundation to build it. Engage at least two or three with a well-scoped brief before selecting a partner.

Build Your Vision-Language Model Solution with Softlabs Group

Softlabs Group specializes in custom AI development tailored to your data architecture, integration requirements, and deployment environment. With 22+ years of enterprise software delivery, a deep computer vision practice, and full LLM/generative AI capability, Softlabs has the technical foundation to architect and deliver production-grade vision-language model systems.

Whether you need a complete VLM pipeline, domain-specific fine-tuning, or want to embed a vision-language model into existing workflows, our AI-assisted development approach delivers quality solutions 2-3x faster than traditional methods.